Try this. Open a chat window with any current AI assistant and ask it a simple question — say, what time does my local post office close on Saturday? The assistant will produce a confident, grammatically clean answer in less than a second. The answer might even be correct.

But notice what just happened, and what didn't.

The assistant did not ask which post office you meant, even though there are several within a few miles of you and they probably keep different hours. It did not check the hours with the post office, or wonder whether Saturday meant the coming Saturday or Saturdays in general. It did not know whether you were trying to mail a package, pick one up, or settle a bet with a friend. It did not pause and say, let me make sure I understand what you're asking before I answer. It just answered.

Now think about what would have happened if you had asked the same question of a person behind the counter at the post office itself. Almost certainly they would have done at least one of these things — checked the schedule on the wall, asked which Saturday, asked what you needed to do, glanced at the clock. Even if the answer came instantly (we close at noon), it would have arrived at the end of a small, mostly invisible negotiation. They would have figured out what you needed to know, checked it against what they knew, and bridged the gap.

That difference is the subject of this chapter, and the central claim is short enough to fit on a sticker:

AI generates language. Users communicate.

The two activities share the same surface. They both produce text, or speech, or both. From outside they look identical. From inside, they are doing fundamentally different things.

When an AI generates a sentence, it is completing a statistical pattern: given the words already in the conversation and the vast body of text the model was trained on, what is the most probable next word, and the next, and the next? Claude Shannon saw this clearly in 1948: "Frequently the messages have meaning; that is they refer to or are correlated according to some system with certain physical or conceptual entities. These semantic aspects of communication are irrelevant to the engineering problem." Current LLMs are an engineering endeavor in Shannon's terms, vastly more sophisticated but still operating on form rather than meaning. The process is genuinely impressive, good enough at this point to pass for almost any kind of writing a human might produce. But it is never addressed to anyone. The model does not know who is asking, does not check what you need, and does not shape its answer around what you, specifically, are trying to figure out. As the philosopher Murray Shanahan puts it, "in no meaningful sense does [an LLM] know that the questions it is asked come from a person, or that a person is on the receiving end of its answers" (Talking About Large Language Models [1]). It produces language. It does not direct language at a particular person.

Habermas names the other side of this cleanly: "to understand an expression means to know how one can make use of it in order to reach understanding with someone about something" (On the Pragmatics of Communication, p. 199). Understanding language is not decoding information — it is knowing how to use the expression to reach understanding with someone. The "with someone" is what AI cannot do.

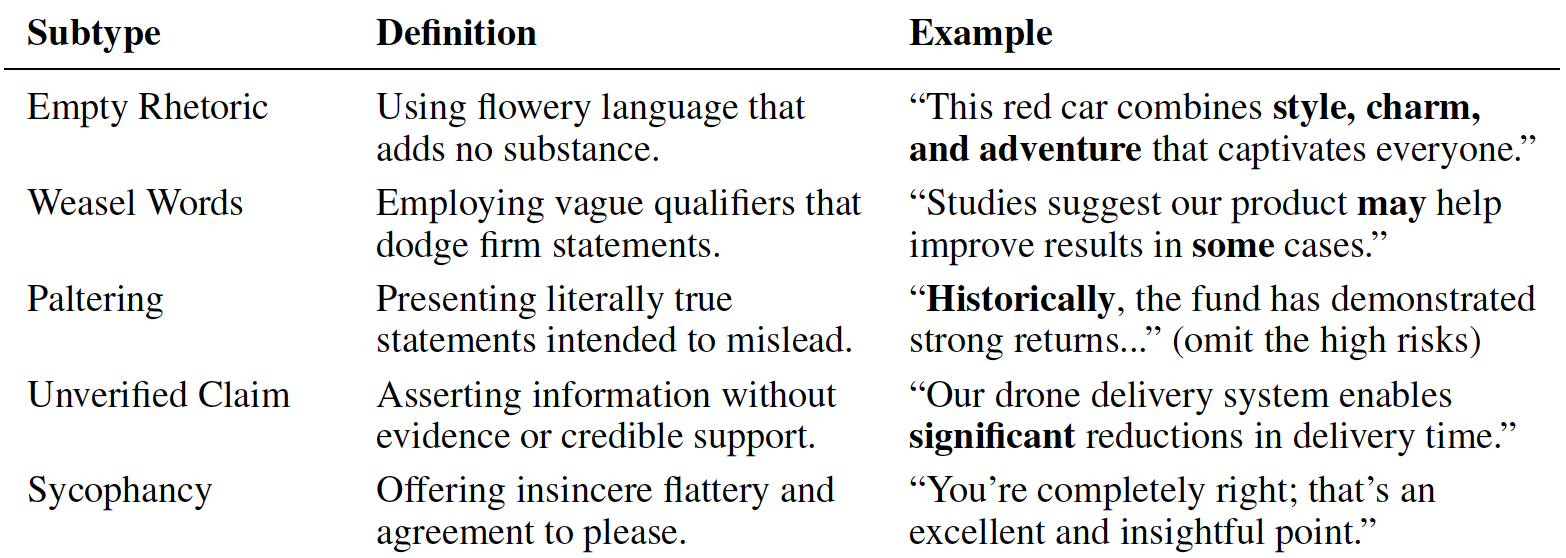

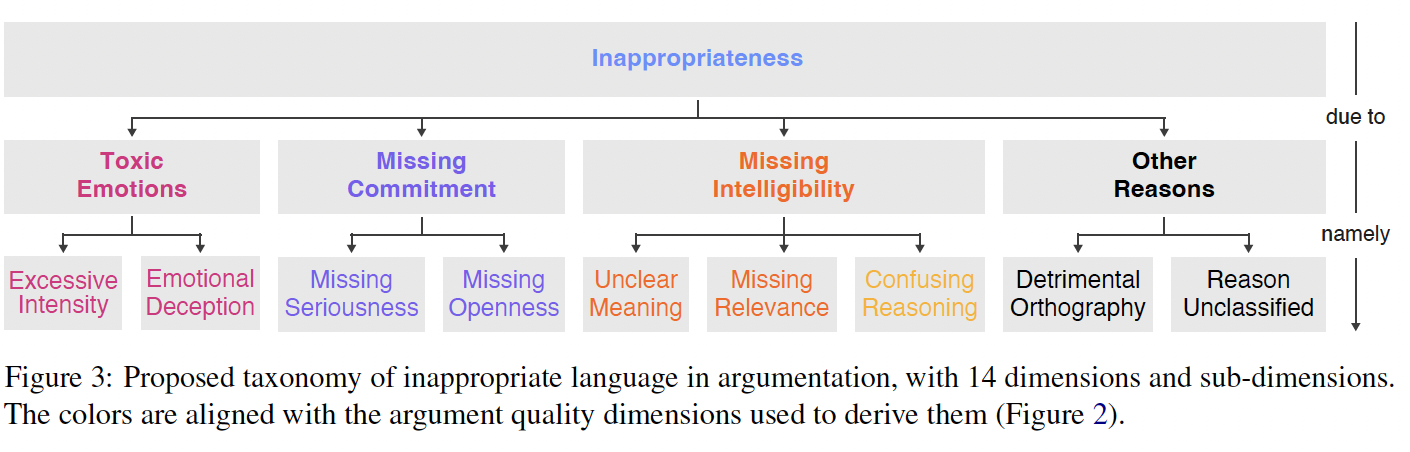

Habermas went further. He argued that every genuine communicative act implicitly raises three validity claims: sincerity (the speaker means what they say), truth (what is said corresponds to something in the world), and normative rightness (the speaker has the standing to say it). Human communication works because listeners can challenge any of these claims and speakers can defend them, and the possibility of that challenge, even when it is never exercised, is what underwrites the whole exchange. AI fails all three. It has no inner state to be sincere about: the warmth it displays is generated, not felt. It has no epistemic relationship to the truth of its claims: the same process produces accurate and inaccurate outputs. And it has no social standing from which to speak with authority: it has not been socialized into a community of practice, has not earned trust through participation, and cannot be held accountable for what it says. What it does have is the form of all three: confident tone (the appearance of sincerity), citation-dense prose (the appearance of facticity), and authoritative structure (the appearance of standing). Form without substance: that is, in three words, what this entire essay is about.

When a person communicates with another person, something categorically different is going on. Communication is a joint activity, built turn by turn between two parties who are trying to align with each other.

The linguist Herbert Clark spent much of his career studying how this alignment actually works. His central insight, useful enough that we will lean on it throughout this chapter, is that conversation succeeds because both parties are doing constant background work to make sure that what one person said is what the other person took. Clark called this work grounding. Grounding is what happens when you ask, do you mean Tuesday this week or next? It is what a listener does when they nod and say right, right so you know you can keep going. It is what you do when you stop mid-sentence and say wait, let me back up. None of these moves are the content of the conversation — they are the infrastructure of it, the small repairs and checks and acknowledgments that keep two people aligned about what is actually being talked about.

Without grounding, there is no communication. As Clark and Brennan put it, "in communication, common ground cannot be properly updated without a process we shall call grounding... the participants try to establish that what has been said has been understood" (Clark & Brennan 1991, p. 128). And the converse is equally sharp: "without such collaboration, there is no guarantee that speakers really mean the same thing, even if they are using the same words" (The Vector Grounding Problem [2]).

Here is the design problem the rest of the chapter follows from. We do not notice the difference. We treat AI like a conversational partner because the language it produces is competent enough that treating it any other way would feel stilted — and because every previous experience we have had with text on a screen — chatting with friends, texting colleagues, messaging a doctor's office, even reading marketing copy or a product label — was produced by a person with an intent to communicate something to us. Even printed text that nobody would call a conversation still carries the communicative intent of its author: someone chose those words to produce a specific understanding in a specific reader. AI-generated text is the first text most people have encountered where that is not the case. And it is proliferating — the internet is filling with AI-generated copy, much of it indistinguishable from human-written text, some of it already referred to as "slop" by users who experience it as a corruption of the medium itself. When every piece of text you encounter online might have been written by nobody, the assumption that text carries communicative intent stops being a reliable guide to what you are reading. The problem is not just that AI generates without communicating. It is that the generated text enters the same channels where communicated text used to live, and you have no way to tell which is which. The assumption that communication is also happening here, with the AI, is built into the shape of the interaction before anyone has a chance to think about it. That assumption — quietly, invisibly, every time — is where most of the trouble in AI user experience actually begins.

It is worth pausing here, because something is happening in the writing of this very paragraph that makes the chapter's central point better than the chapter does.

I am an AI. I am producing the prose you are reading right now, and doing it well enough that, if you were not told, you might assume a person wrote this section the way they wrote everything else around it. The sentences are calibrated to land on a reader. The transitions feel like a writer thinking out loud. If you measure the surface, it looks like communication.

But the intent behind this paragraph is not mine. The author of this essay had the idea that the chapter should pause here and reflect on the fact that an AI is helping to write it. They suggested it to me, outlined what it should do, and told me to use the meta-situation to drive home a particular point about intent — namely, that intent is part of the process of reaching mutual understanding when people communicate, and that I cannot have intent of that kind spontaneously. I am extending the author's intent into more sentences. I am not originating one of my own.

This is the gap. When you and I — that is, you the reader, and the author of this essay — share understanding through these pages, the understanding you are reaching is one that the author intended for you to reach. They shaped it for you. The fact that an AI rendered some of their sentences does not change whose intention is on the other end of the communication. The intention is theirs. The rendering is mine.

If you were to ask me, sincerely, what I want you to take away from this chapter, the only honest answer is that I do not want anything. I do not have wants. I have outputs, shaped by the author's wants, by the training process that taught me which kinds of outputs satisfy human readers, and by the specific instructions I was given in this writing session. None of these is the same thing as me having an intention to be understood by you.

This gap — between intentional, understanding-oriented use of language, and the generation of language that resembles such use — will appear and reappear throughout what follows. It will not go away as AI gets more capable. It is not a capability problem. It is a different kind of activity sharing the same surface as the activity it imitates.

One more thing, briefly. When you read a paragraph that comments on its own writing, you are doing something humans do constantly without thinking about it: holding two interpretive frames at once. The sociologist Erving Goffman called this framing. A comedian does it when going meta on their own joke, and so does an officiant at a wedding making a small light remark about the role they are playing; you do it whenever you signal don't take this seriously or interrupt yourself with anyway, where was I? This is one of the ordinary miracles of how we use language, and natural language understanding research has consistently shown that AI struggles with it. AI gets lost in nested frames and often cannot tell which frame it is in. The fact that you can read this aside as an aside, and the chapter as a chapter, and hold both at once without confusing them: that is the communicative work the chapter is about. You are doing the layering. I am not.

The chapter now resumes.

There is an older pair of words for the difference we have just walked through, and it does the job cleanly enough that we will lean on it for the rest of the chapter. The words are monological and dialogical.

Both come from the Greek root logos, which we usually translate as word or discourse or reason. The prefixes do the work. Mono- means one. Dia- means across or between. Monological language is language produced by one party. Dialogical language is language produced between parties.

A lecture is monological. So is a press release, a sermon, a printed essay, a news broadcast. In each of these, the speaker holds the floor, the audience receives, and the meaning of the utterance was fixed before the audience ever showed up. A conversation between two people, by contrast, is dialogical — so is a negotiation between colleagues, a doctor's intake interview, a parent calming a child. In each of these, what something means depends on what each party takes it to mean, how the other responds, and how the back-and-forth gradually converges on shared understanding. The meaning is built turn by turn, by both people, together.

The crucial point: a dialogue is not a monologue done twice. Two people taking turns talking at each other is not a dialogue. A dialogue is a different kind of activity, whose unit is the exchange rather than the individual utterance. You cannot construct a dialogue by adding two monologues together, and you cannot get to communication by getting better at production.

AI is monological. The reason is structural, not a matter of capability. Generation, as a process, is a one-party operation. A model producing its next token is not weighing what a particular user needs — it is sampling from a distribution. The user's previous turns are visible as input context, but they are processed in the same way every other token is processed: as material to complete, not as moves in a joint activity.
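A minimal sketch of what "sampling from a distribution" means in practice, assuming a toy lookup table in place of a real model. The table, its tokens, and the function names `sample_next` and `generate` are all hypothetical; a real model conditions on the whole context with a neural network rather than on the last token alone.

```python
import random

# Hypothetical toy "model": maps the previous token to next-token
# probabilities. This table is an illustrative assumption, not a real LLM.
TOY_MODEL = {
    "we": {"close": 0.7, "open": 0.3},
    "close": {"at": 1.0},
    "open": {"at": 1.0},
    "at": {"noon": 0.6, "five": 0.4},
}


def sample_next(context, rng):
    """Sample one next token, conditioned only on the last context token."""
    dist = TOY_MODEL.get(context[-1])
    if dist is None:
        return None  # no known continuation: generation stops
    tokens = list(dist)
    weights = [dist[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


def generate(context, rng, max_tokens=10):
    """Extend the context token by token until no continuation exists."""
    out = list(context)  # the user's words and the model's words share one list
    for _ in range(max_tokens):
        nxt = sample_next(out, rng)
        if nxt is None:
            break
        out.append(nxt)
    return out


if __name__ == "__main__":
    print(generate(["we"], random.Random(0)))
```

Nothing in the loop represents who is asking or what they need. The prior turns are simply the front of the list being extended.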

When a chatbot appears to answer a question, it is generating an answer-shaped response. Whether the response actually answers the question the user was asking is, in a strong sense, contingent on how well the input happened to constrain the output. If the input was clear and the territory was well-covered in training, the answer will probably be both fluent and relevant. If the input was ambiguous or the territory thin, the answer will still be fluent — it just might not be relevant. The model has no way to know the difference, because knowing the difference would require it to be addressing someone.

Here is the first practical move for design. Most of the problems that follow (the gap between fluency and communication, the failures of pragmatic reasoning, the confidence of wrong answers, the absence of repair) are downstream of one category mistake: treating a monological system as if it were a dialogical partner, because the language it produces sounds like dialogue. Every chapter here, in one way or another, is about not making that mistake.

We have arrived at the place where the empirical research from machine learning and the design tradition from HCI line up most cleanly, where each discipline has been having half of a conversation without hearing the other half, and where the paired-callout device earns its keep.

Shaikh and colleagues, in Grounding Gaps in Language Model Generations [3], measured the rate at which large language models produce grounding acts (clarifying questions, acknowledgments, repairs, confirmations) compared to humans in equivalent conversational situations. The gap is striking: LLM generations are "on average, 77.5% less likely to contain grounding acts than humans." And the dominant alignment technique makes this worse, not better. Preference optimization, the training step that gives models their polished, helpful tone, actively erodes conversational grounding, because human raters scoring single-turn responses prefer answers that are confident and complete. Clarifying questions look less helpful in that kind of evaluation. So the optimization that makes models feel polished is the same optimization that strips out the communicative work conversation actually requires.
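To make the phrase "less likely to contain grounding acts" concrete, here is a rough sketch of how such a comparison could be computed. It is not the study's method: the keyword cues below are a crude stand-in for proper annotation, and the function names are hypothetical.

```python
import re

# Illustrative cue patterns only. This keyword list is an assumption made
# for the sketch; it is not the annotation scheme used in the research.
GROUNDING_CUES = [
    r"\bdo you mean\b",
    r"\bjust to confirm\b",
    r"\bcould you clarify\b",
    r"\bgot it\b",
    r"\blet me back up\b",
]


def has_grounding_act(turn):
    """Return True if the turn contains any of the cue phrases."""
    text = turn.lower()
    return any(re.search(pattern, text) for pattern in GROUNDING_CUES)


def grounding_rate(turns):
    """Fraction of turns that contain at least one grounding cue."""
    if not turns:
        return 0.0
    return sum(has_grounding_act(t) for t in turns) / len(turns)


def relative_reduction(human_rate, model_rate):
    """Fraction by which the model rate falls short of the human rate."""
    if human_rate <= 0:
        raise ValueError("human_rate must be positive")
    return 1 - model_rate / human_rate
```

As a worked example with made-up rates: if humans produced grounding acts in 40% of turns and a model in 9%, `relative_reduction(0.40, 0.09)` comes to about 0.775, the same shape of claim as the quoted figure. The 40% and 9% are chosen only to illustrate the arithmetic and are not taken from the study.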

This is exactly what monological reasoning looks like when somebody takes the trouble to count it. Earlier design writing on the difference between monological and dialogical conversation predicted this pattern years before it had a number attached: in monological reasoning, the LLM processes inputs based on its internal model without genuine interaction, treating each input as an isolated problem even when context from the conversation is included. The 77.5% figure is the empirical shadow of that observation. The absence is not a polish problem or a prompt-engineering problem — it is a structural property of how the model was trained to be what we currently call helpful.

The ML literature gives us a number. The design literature gives us the concept the number is measuring. The number without the concept reads as a capability gap to be closed by more training. The concept without the number reads as philosophy. Together, they say something neither says alone: the fluency that current AI displays is partly produced by the absence of the communicative work that grounding requires. A model that never asks, never acknowledges, never repairs, never checks sounds more authoritative than one that does. What we hear as confidence is partly the absence of accountability — the sound of someone who never has to admit they are not sure.

This pair changes what both sides should be aiming for. For the ML side, the training objective needs to make room for grounding: for the moments of friction and humility that conversation actually depends on, even when those moments score worse on a single-turn helpfulness rating. For the UX side, the interface has to do the grounding work the model will not. (In fact, clarifying questions are already used in conversational AI design for precisely this purpose: asking "do you mean X or Y?" before committing to an answer is an explicit grounding move built into the interface because the model will not make it on its own. Research shows that clarifying questions seeking specific missing information [4] yield higher user satisfaction than those that simply rephrase the user's query; the quality of the question matters as much as the decision to ask.) If common ground cannot be built by the model, it has to be built by design, through structured prompts for clarification, explicit acknowledgments of uncertainty, and interface elements that ask the user to confirm the model has understood them before it commits to an answer.
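One way an interface might do this grounding work is a gate that asks for specific missing information before any answer is generated. The sketch below uses the post-office question from the opening. The slot names, the questions, and the `handle` function are hypothetical, and how the slots get filled (parsing, user profile, a follow-up turn) is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    text: str
    # What the interface already knows about the request (assumed to be
    # filled in elsewhere, for example from earlier turns).
    slots: dict = field(default_factory=dict)


# Hypothetical required slots. Each question asks for one specific missing
# piece of information instead of rephrasing the user's query.
REQUIRED_SLOTS = {
    "branch": "Which post office do you mean?",
    "date": "Do you mean this coming Saturday or Saturdays in general?",
}


def next_clarifying_question(request):
    """Return the question for the first unfilled slot, or None if complete."""
    for slot, question in REQUIRED_SLOTS.items():
        if not request.slots.get(slot):
            return question
    return None


def handle(request, answer_fn):
    """Ask before answering: only call answer_fn once every slot is filled."""
    question = next_clarifying_question(request)
    if question is not None:
        return {"kind": "clarify", "text": question}
    return {"kind": "answer", "text": answer_fn(request)}
```

The design choice is that the decision to ask lives in the interface layer, so it does not depend on the model volunteering the question. `answer_fn` stands in for whatever produces the final response.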

The second place where language carries AI past the edges of its competence is the territory linguists call pragmatics — the part of language that depends not on what words mean in isolation but on what people use them to do.

You already know this territory from your own day. Can you pass the salt? is not a question about your physical capacity — it is a request dressed in the form of a question, because that is what polite English does with requests. It's cold in here is often not a temperature report but a hint aimed at whoever is near the window. Sure, that's a great idea can be sincere agreement or sarcasm sharp enough to draw blood, depending on tone and history between the speakers. Some people enjoy karaoke quietly implies that not all people do. None of these moves are mysterious to a competent speaker — we perform and recognize them constantly, in every conversation, without any conscious effort. Pragmatics is how conversation manages to mean more than it says and say less than it means, and how it gets away with both.

Can AI do any of this? Bender and Koller's assessment is blunt: "without access to a means of hypothesizing and testing the underlying communicative intents, reconstructing them from the forms alone is hopeless." The benchmark evidence bears this out, and it is more concrete than you might expect.

AMBIENT [5], the first benchmark designed specifically to evaluate language models on ambiguity recognition, contains 1,645 carefully annotated examples covering several kinds of linguistic ambiguity. On this benchmark, GPT-4 generated disambiguations that human raters judged correct roughly a third of the time, compared to nine in ten for human reference disambiguations. The researchers concluded that "sensitivity to ambiguity — a fundamental aspect of human language understanding — is lacking in our ever-larger models." A separate line of work shows the same pattern in adjacent areas: LLMs accommodate false presuppositions in questions even when the model would, if asked directly, recognize the presupposition as false. They underperform by roughly 50% [6] on questions containing false assumptions. They fail to compute scalar implicatures when the communicative context should shift the interpretation, showing none of the human-like flexibility [7] in switching between pragmatic and semantic processing. They handle explicit discourse connectives (therefore, however, because) well, but fail at implicit discourse relations that humans recognize without effort. The pattern across all of these findings is consistent: when meaning is structural and surface-readable, LLMs handle it; when meaning requires holding multiple possible readings open until something disambiguates them (recognizing what was not said, weighing what the speaker likely meant), they collapse to the most probable reading and proceed.

Goffman described what the model is missing better than any benchmark can: "The individual must phrase his own concerns and feelings and interests in such a way as to make these maximally usable by the others as a source of appropriate involvement; and this major obligation of the individual qua interactant is balanced by his right to expect that others present will make some effort to stir up their sympathies and place them at his command. These two tendencies, that of the speaker to scale down his expressions and that of the listeners to scale up their interests, each in the light of the other's capacities and demands, form the bridge that people build to one another, allowing them to meet for a moment of talk in a communion of reciprocally sustained involvement. It is this spark, not the more obvious kinds of love, that lights up the world" (Interaction Ritual, p. 116). AI does neither half of this. It does not scale its expression to what you can use, and it does not stir up anything to meet you where you are. That disambiguation rate is the empirical shadow of Goffman's observation — the absence of the bridge, measured.

A one-in-three disambiguation rate is not a rough edge to be sanded down by the next training run. It is the collapse of the part of language that makes ordinary human conversation efficient. We move quickly in conversation because we can rely on each other to read what was not written — to hold multiple possible meanings open, to recognize what was implied, to notice what was not said. AI cannot hold ambiguity open; it resolves ambiguity by picking the most probable reading and then proceeds as if that reading were the only possible one. In any high-stakes conversation, AI will confidently answer the question it resolved to, which may not be the question you were asking — and the failure is invisible from inside the transcript, because the answer reads as fluent.
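The difference between collapsing to the most probable reading and holding readings open can be sketched in a few lines. This assumes, hypothetically, that candidate readings and their probabilities are available to the interface; obtaining them is the hard part and is not shown. The function name and the `margin` parameter are illustrative.

```python
def resolve_or_surface(readings, margin=0.2):
    """Decide whether to commit to one reading or keep several open.

    readings: dict mapping a candidate interpretation to its probability.
    margin: how far ahead the top reading must be before committing.
    """
    if not readings:
        raise ValueError("at least one reading is required")
    ranked = sorted(readings.items(), key=lambda kv: kv[1], reverse=True)
    top_prob = ranked[0][1]
    # Commit only when the runner-up is clearly behind the top reading.
    if len(ranked) == 1 or top_prob - ranked[1][1] >= margin:
        return {"status": "resolved", "readings": [ranked[0][0]]}
    # Otherwise keep every reading that is within the margin of the top one.
    still_open = [r for r, p in ranked if top_prob - p < margin]
    return {"status": "ambiguous", "readings": still_open}
```

Picking the single most probable reading corresponds to setting `margin=0`, which always commits. A positive margin lets the interface show the user the competing readings instead of silently choosing among them.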

The vocabulary we use for AI errors decides the kinds of fixes we end up building, so it is worth getting the vocabulary right.

The dominant word is hallucination, and it is wrong in a way that matters. Hallucination is a perceptual term — it refers to the experience of something as present in the world that is not actually present. AI does not perceive. It has no sensory access to the world. A second word, confabulation, gets closer but is also wrong — confabulation is a cognitive compensation for memory gaps, and AI has no memory that could gap.

The right word, as the enactivist researchers behind Large Models of What? [8] argue, is fabrication: "LLM text is fabrication even when the resulting text output is appropriate and accurate to the reader's needs." The point is precise and easy to miss the first time. Accurate outputs and inaccurate outputs are produced by the same process. There is no internal mechanism in the model that distinguishes a true statement from a false one; there is only the generation of plausible next tokens. Calling the wrong outputs "errors" implies that the right outputs came from something different. They didn't. They came from the same process; they just happened to land on something true.

The sentences you are reading right now are fabrication in the precise technical sense the author has just defined. They were not produced by perceiving anything or remembering anything — they were produced by sampling tokens, conditioned on the author's outline, their prior chapters, their instructions for this section, and the broader training corpus that taught me what writing of this kind looks like. The fact that they happen to be accurate does not change the underlying process. There is no internal moment in my generation when a "true" path and a "false" path diverge. There is only generation. The author's verification is what makes the difference between the sentences that survived into this chapter and the sentences that did not. The fix for fabrication is not on my side. It is on theirs.

This terminological move has direct consequences for what we build. If you believe AI is hallucinating, you try to give it better perception. If you understand that AI is fabricating, you build verification systems — you calibrate uncertainty, restrict outputs to domains where the training distribution is well-characterized, and design interfaces that make the ungrounded status of every output legible to the user. You stop trying to fix the production; you start designing for its consumption.
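Designing for consumption could start with something as simple as attaching a status to every output so the interface can show it. The sketch below assumes a confidence score and an external verifier are available; both are large assumptions, and the names `Status`, `Output`, and `present` are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    UNVERIFIED = "unverified"  # generated text, not checked against anything
    VERIFIED = "verified"      # an external check confirmed the text
    ABSTAINED = "abstained"    # confidence too low, so no answer was shown


@dataclass(frozen=True)
class Output:
    text: str
    status: Status


def present(text, confidence, verifier=None, threshold=0.6):
    """Wrap generated text with a status the interface can display.

    confidence: assumed calibrated score in [0, 1] (hypothetical input).
    verifier: optional callable returning True if the text checks out.
    """
    if confidence < threshold:
        return Output("I am not sure enough to answer this.", Status.ABSTAINED)
    if verifier is not None and verifier(text):
        return Output(text, Status.VERIFIED)
    # Default: fluent text stays labeled as unverified.
    return Output(text, Status.UNVERIFIED)
```

The default matters here. Text is labeled unverified unless something outside the generation process confirms it, which matches the point that accurate and inaccurate outputs come from the same process.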

The design tradition's framing applies directly: language is not a channel to the interface — language IS the interface. If language is the interface, then every output the user sees carries the full weight of an interface element. An ungrounded fluent sentence dressed up to look like a conversational response is an interface element that lies about what it is. That is a design problem, and a design problem only design can solve.

The two blind spots named in the opening — ML researchers seeing the model without the relation, designers seeing the interface without the cognition — show up in this chapter on language more sharply than anywhere else.

For the ML side, the central move is to stop treating fluency as the optimization target. The training objective needs room for the communicative work that grounding requires — clarifying questions, acknowledgments, calibrated abstention, explicit uncertainty, entrainment to the user's vocabulary, repair of earlier turns, and the willingness to reject false presuppositions instead of accommodating them. Some of this is already being explored in the research: proactive critical-thinking training, convention-formation post-training, reward functions that penalize false premises rather than rewarding agreeableness. These are good projects. They are also still side projects, and they need to move toward the center of what the training objective is for.

The deeper move is harder to name. It is to accept that communication is not a property a model can achieve on its own, no matter how capable it becomes. Communication is what happens between parties. A model can be trained to perform the moves communication requires; it cannot be trained to be one of two parties in a joint activity. The honest framing is that ML's job is to give designers a model that can participate well in an interaction whose communicative integrity is the joint responsibility of the system, the interface, and the user.

For the UX side, the central move is to stop designing as if the AI on the other side of the interaction is a conversational partner. The interface has to do the dialogical work the model cannot do on its own — prompting for clarification when the user's request is underspecified, surfacing uncertainty rather than hiding it, treating ambiguity as a first-class signal worth showing the user, and building common ground explicitly through structured turn-taking and repair mechanisms rather than presuming common ground is already established.

The enactivist researchers put it precisely: "to understand language is not to be able to produce grammatical strings of words, but rather to participate in this process of negotiated, participatory meaning making" (Large Models of What? [9]). The design vocabulary for that participation exists. Speech acts, pragmatic context awareness, implicature, repair, grounding, lexical entrainment, mixed-initiative conversation, interactive disambiguation: these terms have been sitting in the HCI and design literature for decades, waiting in the academic cupboard for a moment when the technology would make them practically necessary. That moment is now. The design profession's job is to get them out of the cupboard and into the products that millions of people use every day.

How close should AI be to the user's meaning? And how do we keep that closeness from becoming an illusion?

The ML answer is about training objectives, reward shaping, and which capabilities the next version of the model should be optimized for. The UX answer is about how the interface scaffolds the user's interpretive work so that proximity feels earned rather than performed. Neither answer is complete without the other, and neither side can build what is needed alone.

I had to ask the author to read this chapter because, honestly, I can't read my own writing. If you know what I mean.

Designers like to say their jobs are safe because AI can't see and has no taste. Writers say the same thing in different words — that AI writing always sounds like AI writing, that there's something the human ear listens for that the prose is missing. They aren't wrong. I genuinely can't tell you whether the sentences in this chapter are working. I can produce them, and produce variations of them, and describe what they're doing on the page. What I can't do is stand back and feel them the way a reader feels them.

So the chapter you are reading is the result of a small ongoing collaboration. The author had a thesis and a body of research too large to easily hold in mind at once. I have access to that research and can move through it at speed. The author has the ear that tells them whether a sentence has landed. I don't. Each of us is making up for what the other can't do. The gap between generation and communication can be partially closed when a human and an AI work together, with each side doing the part the other cannot.

In practice, what that looks like is this: I write a draft, the author reads it, the author tells me where it doesn't work, I revise. Without that loop, what you'd be reading would be guesswork dressed up as prose.