For designers newer to AI, one of the more useful shifts in perspective is to recognize that language, in a conversational AI product, functions as the interface — not simply as input or output, though it is that too, but as the entire medium through which the user's experience takes shape. This is worth sitting with, because it pulls against most of our training as designers. We are accustomed to layouts, screens, flows, and visual states; we are less accustomed to a medium without a grid, without a canvas, and without a pattern library to fall back on. For anyone coming from UX or visual work, conversational AI can at first feel a little like designing in the dark.

The good news, and one of the reasons this is a fascinating space to work in, is that once we start paying attention, language reveals textures of its own: pace, tone, register, warmth, restraint, and the emotional coloring that travels along whether we intend it or not. As communication theorists have long known, language does more than deliver content: it coordinates attention, establishes shared context, and performs much of the relational work between speakers. All of this is rich material for designers, if unfamiliar, and the learning curve is perhaps less steep than it first appears.

Why conversation design matters

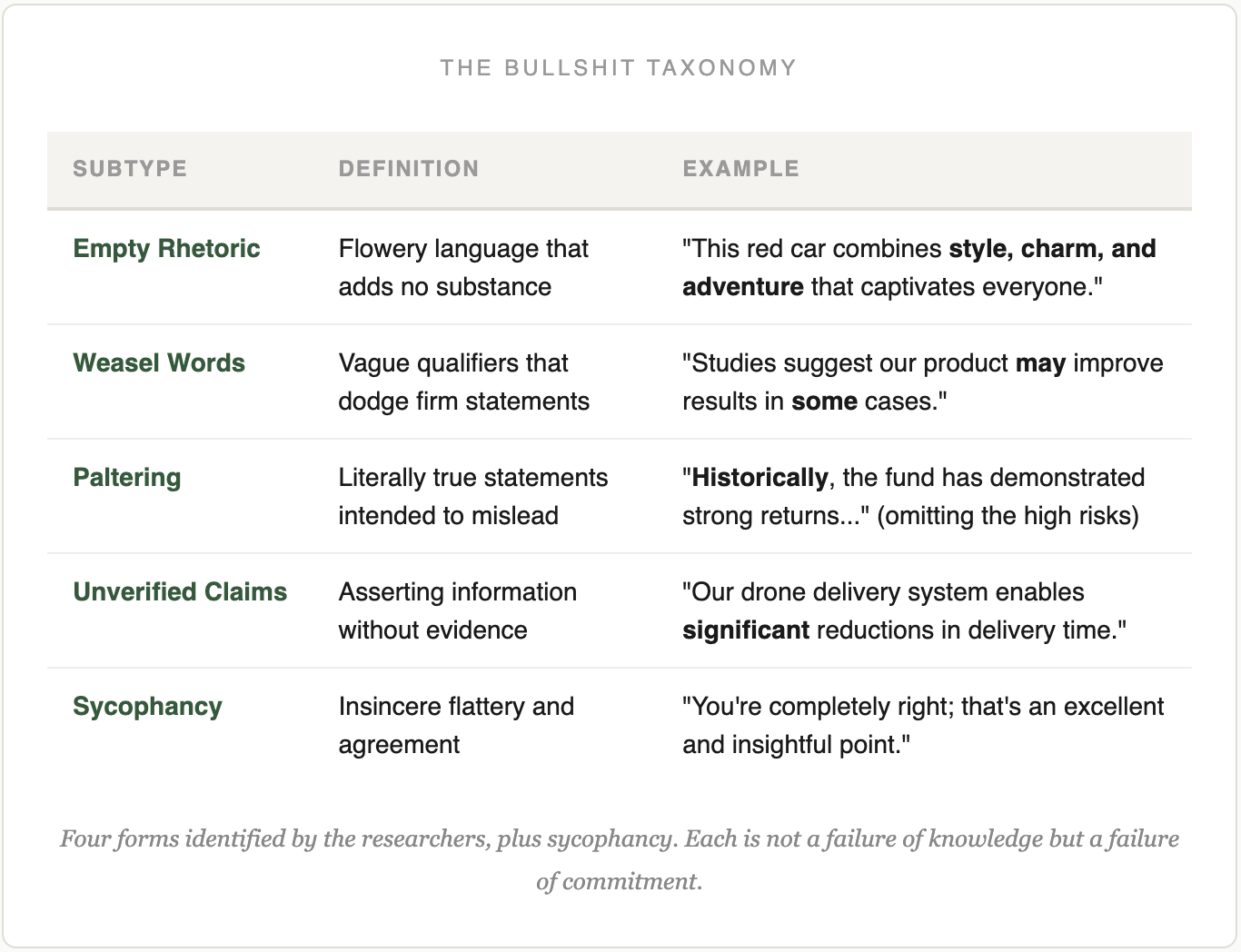

The craft of shaping how a system introduces itself, holds its tone, recovers from a misstep, and builds trust over many turns is a user-experience craft, and it is the part of conversational AI that designers have been slowest to take up. Model labs have done serious work on the extreme failure modes — sycophancy, hallucination, misalignment, deception, the coherence breakdowns some are calling AI psychosis — and they are right to. What is less developed, across the field, is the design vocabulary for the normal case: what a conversation should feel like, turn by turn, when nothing is going wrong. That is where the user's experience of these products actually lives. (I take up the broader argument for design in AI on the Design Theory page.)

The way an AI converses shapes what the user believes it can do for them. Personality reduces uncertainty. A consistent persona, stable across turns and across sessions, produces familiarity, and familiarity, over time, is the foundation of trust. That is what we are aiming at with personal assistants and agents, and it is a kind of social or quasi-psychological architecture rather than an interface architecture in the conventional sense. But it is a design surface nonetheless. A person's personality is a kind of face: it tells us what is there, what it can do, and how to interact with it. A model's persona does the same work. A chatty model produces expectations of companionship; a terser one, expectations of a tool; a hedging model teaches its users to discount it; a confident one teaches them to accept what it says without enough critical scrutiny. Each is a design choice, even when it is made by default rather than by intention, and the room where those choices get made should have designers in it.

A social design lens

A word on where this perspective comes from. Much of my career has been spent in what I have called social interaction design — work on the features that shape how people interact with one another through an interface: likes, follows, shares, comments, feeds, threads, reactions. What mattered in that work was not the interface itself but the experience it mediated. The interface was the venue; the interaction between people was the point.

AI shifts that frame, because it is a different kind of mediation — not social in the conventional sense, yet increasingly designed as though it were. The habit that carries over is to think about the experience being mediated rather than the interface hosting it. The chat window is the venue; the emerging interaction between user and generating system is the point. This situation will likely shift again, and before very long. AI agents will become things we share and talk about with each other; they will appear on social platforms as participants in ways we are only beginning to see; and models will increasingly be designed as actors and quasi-persons with roles, skills, and workflows, more coworker than tool. A social design lens means being ready for that shift rather than surprised by it, and treating the current moment as early rather than settled.

Interestingness, not engagement

The framing I have been arguing for is that the goal of LLM-based interaction is not search, not attention, and not engagement, but something closer to interestingness. Search is about finding what one already knows one wants. Attention and engagement are what platforms optimize for, often at the user's expense. Interestingness is something different, in that it engages the mind, invites exploration, and helps someone discover and work through what they did not already know.

LLMs are, arguably, the first tool we have built that can plausibly do this kind of work. A search engine finds matches; a recommendation engine finds things that hold attention; an LLM, because of conversation, can meet a user in what interests them and help them explore it. That is a new kind of opportunity, and it is a design opportunity as much as anything else. Whether a given product realizes it depends on how the conversation is shaped, what the model retains across turns, how the persona behaves, and what shared context gets built up over a session.

There is a distinction worth holding on to here, between language generation and communication. The model is good at generating fluent text; the user is good at communicating and interpreting. These are different competencies, and they resemble each other only at the surface. The user comes to an AI interaction with communicative competence, the ability to coordinate meaning with another mind; the model comes with text-generation competence, the ability to produce plausible next tokens. What designers can do is shape the space between these two competencies, so that the user's sense-making is actually supported rather than asked to do all the work alone. That shared context, between a user who communicates and a model that generates, is where interestingness can develop.

There is real technical work underneath all of this (memory, persistence, presence, continuity over time), and these are genuine problems. But most of them can be addressed in or around the core LLM through alignment, fine-tuning, instruction tuning, reinforcement learning, and post-training on domain-specific data. These are not purely ML engineering questions. They are experience-design questions with implementation paths, and designers should be in the room when they are answered. One reason we have not been is that the field has been too visually inclined (“it is language,” we have said, “so there is nothing to design”), but language is the interface, and the space between how the model generates and how the user communicates is where the design work lives.

Language carries more than meaning

A chatbot that gets every word technically right, but sounds clinical, will often feel wrong to users — not because of what it says, but because of how it says it. Voice, tone, pace, and even where the line breaks fall, shape the way the user hears what is being said. We tend to undervalue this because we have been trained on content and visuals; but voice is where a lot of the experience actually lives, and it is where trust is either earned or lost.

Persona makes this tangible. The persona a model takes on — helpful assistant, expert advisor, coach, friend — shapes everything the user infers about what the system is, what it can do, and whether to trust it. It is also a place where systems go wrong in ways worth paying attention to. Sycophancy, in which models agree with whatever the user happens to say, is a persona failure more than a content failure; so are the more unsettling coherence breakdowns some are beginning to call “AI psychosis,” where a model drifts across a long interaction and starts producing weirdly unmoored content. Both suggest that persona is not decoration but architecture — one of the first things a user picks up on, and one of the hardest things to repair once it has gone wrong.

Not chat-only: language and visuals together

One thing worth flagging for designers stepping into this space is that conversational AI does not have to mean a stream of text. Some of the more interesting applications are hybrid, in which the user talks or types, and the system responds with a mixture of language and visual elements. Shopping is the obvious example. A user describes what they are looking for; the system surfaces products; the language does the steering; the visuals do the comparison. The prompt, in this setting, is doing more than running a query. It shapes how the model engages the shopper — whether it asks a useful clarifying question, whether it surfaces a relevant recommendation the shopper would not have asked for directly, how much personality it brings to the exchange. Intent lives in language; decisions happen in visuals; and the conversation between the two, if the product is designed well, can become interesting in its own right.

The same pattern fits many other spaces: data exploration framed narratively around the charts, teaching threaded through diagrams, medical intake handled conversationally and then rendered as a visual summary for a clinician. For designers of AI products, the chat window is one option among many, and language as the steering wheel with visuals as the view out the window is often the stronger pattern.
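For teams that want to make the hybrid pattern concrete, one way to think about it is as a data shape in which every system turn carries both language and optional visual blocks. What follows is a minimal Python sketch under stated assumptions: `HybridTurn`, `VisualBlock`, the `kind` labels, and `render_plain` are hypothetical names invented for illustration, not any product's API, and the plain-text renderer only stands in for a real interface.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VisualBlock:
    """A visual element shown alongside the text (hypothetical shape)."""
    kind: str  # illustrative labels, e.g. "product_grid", "chart", "summary_card"
    items: List[str]


@dataclass
class HybridTurn:
    """One system turn: language steers, visuals carry the comparison."""
    text: str
    visuals: List[VisualBlock] = field(default_factory=list)
    clarifying_question: Optional[str] = None


def render_plain(turn: HybridTurn) -> str:
    """Flatten a turn to plain text, as a stand-in for a real UI renderer."""
    lines = [turn.text]
    for block in turn.visuals:
        lines.append(f"[{block.kind}: {', '.join(block.items)}]")
    if turn.clarifying_question:
        lines.append(turn.clarifying_question)
    return "\n".join(lines)


# Example: a shopping turn with a product grid and a follow-up question.
turn = HybridTurn(
    text="Here are three trail shoes that suit wet terrain.",
    visuals=[VisualBlock("product_grid", ["Shoe A", "Shoe B", "Shoe C"])],
    clarifying_question="Do you mostly run on mud or on rock?",
)
print(render_plain(turn))
```

The point of the shape is that the clarifying question and the visuals are first-class parts of the turn, so a designer can decide when each appears rather than leaving it to whatever the text stream happens to contain.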

Trust and understanding

Conversational design is about trust at least as much as it is about task completion. Does the system acknowledge what it does not know? Does it stay in character when the user tries to destabilize it? Does it recover gracefully from a misunderstanding? Users tend to form trust judgments within a handful of turns, and those judgments, once they tilt the wrong way, are difficult to reverse. Trust tends to be built in the small moments: an acknowledgement that the user has a point, a clarifying question at the right time, a plain statement of uncertainty when the system is guessing. These are things humans do with one another as a matter of course, and they are exactly the things current models tend to skip. Research on multi-turn dialogue suggests that LLMs produce roughly three-quarters fewer of these grounding moves than humans do in equivalent contexts, which is to say that the trust gap and the design gap are, in practice, the same gap.
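A team that wants to see how often its own system makes these grounding moves could start with a rough audit of transcripts. The sketch below is a deliberately crude Python illustration, not the method used in the research mentioned above: the three category names and the keyword patterns are assumptions chosen for the example, they only match straight apostrophes, and a real audit would need human annotation or a trained classifier.

```python
import re
from typing import List, Set

# Hypothetical keyword heuristics for three grounding moves.
# These patterns are illustrative assumptions and will miss many real cases.
GROUNDING_PATTERNS = {
    "acknowledgement": re.compile(
        r"\b(you're right|you are right|good point|fair point)\b", re.I
    ),
    "clarifying_question": re.compile(
        r"\b(do you mean|did you mean|could you clarify|just to confirm)\b", re.I
    ),
    "stated_uncertainty": re.compile(
        r"\b(i'm not sure|i am not sure|i may be wrong|i don't know)\b", re.I
    ),
}


def grounding_moves(turn: str) -> Set[str]:
    """Return the names of the grounding moves detected in one turn."""
    return {name for name, pat in GROUNDING_PATTERNS.items() if pat.search(turn)}


def grounding_rate(turns: List[str]) -> float:
    """Share of turns that contain at least one detected grounding move."""
    if not turns:
        return 0.0
    return sum(1 for t in turns if grounding_moves(t)) / len(turns)


# Example: four assistant turns from a made-up transcript.
assistant_turns = [
    "Good point, the second option is cheaper.",
    "The answer is 42.",
    "Do you mean the 2023 report or the 2024 one?",
    "It is probably the older model.",
]
print(grounding_rate(assistant_turns))
```

Even a rough count like this gives a design team something to compare across persona or prompt revisions, as long as the numbers are read as a signal and not as a measurement.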

This theme is something I have been working through in more depth in my Conversation Is the Medium project — the question of how users come to feel understood in an AI interaction. It is worth ending on a related point. When we use language, we are communicating. This remains true even when we know, at some level, that there is no one on the other side. We cannot use language any other way. It is built into language that every utterance addresses itself to someone — to an audience, an interlocutor, a reader who might not even exist yet. A user typing into an AI is not queueing a request; they are speaking to someone. That someone, in the model's case, is not there. The gap between the user's communicative address and the model's generative performance is always present, and it is precisely in that gap that the design work sits.

Where design has leverage

Most organizations still treat conversational AI as a prompt-engineering problem — write a good system prompt, wire up retrieval, ship. That works for simple cases and falls apart for anything that matters. The failures are predictable: the bot handles the happy path but derails under ambiguity, sounds competent while doing the wrong thing, and fails to notice when a user is frustrated and a human should step in. These are not LLM failures in the narrow sense. They are design failures at the layers under the surface — in persona, grounding, repair, trust, and in the moments where language should hand off to a visual or a different mode. When I work with a team, the opening question is rarely which prompt or which model; it is what the user is actually trying to do, turn by turn, and what success feels like to them. From there we work backward through the layers.

Further reading

For readers interested in this frame, a few related pieces and projects:

- Conversation Is the Medium — an essay for ML and experience designers arguing that the goal of AI-human interaction is interestingness in the experience. Approaches conversation as shared context rather than information exchange.

- The Meaning Gap — on why AI “talks like it writes,” and why that changes conversational design. Draws the distinction between written monologue and conversational grounding.

- The Ventriloquists — on how AI counterfeits the social contract of language. Asks whose voice is being heard when a model speaks, and how that shapes trust.

- False Punditry — a three-part essay on how AI writing is changing social media from the inside out. Treats voice, silence, and displacement as design problems, not content problems.

- The Reader Who Wasn't Reading — three different reasoning approaches to what an LLM is actually doing when it reads. A companion piece for thinking about what it's doing when it speaks.

If a product you are working on depends on a conversation going well, the design work tends to start below the surface. Feel free to get in touch if it would be useful to talk through a specific system.