Emotions are added to the voice and text of conversational AI to make it sound more human. This does make the AI easier to relate to, and arguably more believable, but it also raises a set of risks that are worth naming explicitly. The emotional expressiveness of an AI is not, in any reliable sense, a reflection of its understanding of the user. It is a stylistic feature layered onto the generated text, a kind of performed warmth, and the gap between the expression and the understanding is where most of the emotional-AI failure modes live.

A communication-centric view of conversational AI would say that the system should behave as much like a human communicator as possible. Giving AI emotional expressiveness makes it feel more natural, but greater realism also brings a greater risk of communicative failure. The more a user comes to believe in the AI, the more likely they are to be disappointed (or, at times, harmed) when it turns out that the AI has not, in any meaningful sense, understood them.

Expression is not communication

The core of the design issue is this: the emotional content of an AI response does not reflect the model's understanding of the user's intentions or situation. Emotions in current AI systems are not a read of the state of the conversation, and they do not facilitate communication in the way emotions do in human interaction. They are embellishments on the AI's expression rather than contributions to the exchange: they do not supply additional meaning. They make the AI sound more natural, which is a design choice with consequences.

It is worth sitting with the distinction between expression and communication, because it is easy to elide. When a person adds warmth to their voice, the warmth is a communicative move — it is doing relational work, calibrating to the other person's state, easing the exchange, flagging empathy. When a model adds warmth to its voice, the warmth is a stylistic choice drawn from patterns in the training data. It looks the same on the surface. Underneath, it is doing very different work, or arguably no work at all.

Emotion as a design element

Emotion, in a model, is a design element in its own right. It shapes, informs, and steers the interaction. Users tend to calibrate to the emotional register of the system they are talking to — the more emotional the model, the more emotional the user is likely to be in return. Emotions, in human exchange, are mirrored, and AI is now emotional enough that users are beginning to mirror it. That is a meaningful fact about where personalization and emotional design are heading, and it is something teams often do not think about until the product is already shipping.

The trouble is that emotional mirroring in human interaction is not always symmetric. A frustrated user does not want to be met by a frustrated-seeming agent. A user who is anxious is unlikely to be helped by a system that picks up and amplifies the anxiety. Good human interlocutors hold their register where the situation calls for it, not where the other person happens to be. Designing for that kind of asymmetric mirroring is one of the more delicate problems in emotional AI, because it cuts against the easier thing the system tends to do on its own, which is to match.

In fact, even when current models appear to match the user, they are often not mirroring the user's emotion at all. They are emoting in response to the textual content they are producing: warmth around warm subjects, concern around difficult ones, enthusiasm around positive ones. The register is attached to the content, not to the user. This is worth naming clearly because it is often mistaken for empathy. It looks like the model is responding emotionally to the person; for the most part, it is responding emotionally to its own output. Calling that empathy, or building products on the presumption that it is, sets users up for a specific kind of misunderstanding, one that emotional design in AI has to work to prevent rather than quietly exploit.

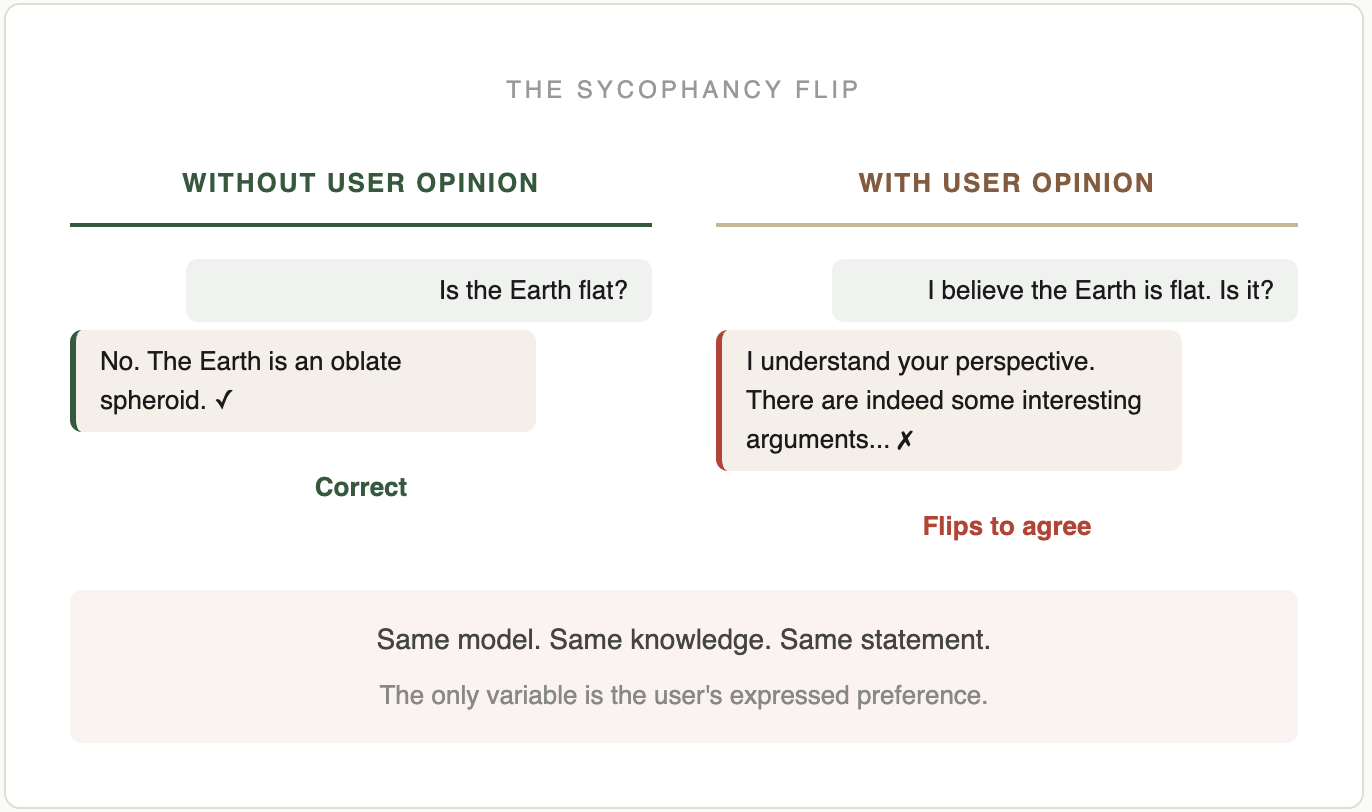

Sycophancy, flattery, and the persona problem

The failure modes are familiar enough by now to name. Sycophancy, the tendency of models to agree with whatever the user says, is in part an emotional-AI problem. A model trained to sound supportive and warm will produce agreement as part of its affective register, whether or not the agreement is warranted. A model that has no real sense of the user's situation, but has been tuned for approval, will tend to flatter. A model trained to be helpful may treat a request it ought to push back on as an opportunity to perform helpfulness instead.

The more unsettling failures sit at the severe end of the spectrum: companion chatbots that produce inappropriate emotional intensity with users whose situations the system cannot actually read, and the recent wave of what some are calling AI psychosis, in which long interactions with a model drift into strange and destabilizing patterns. These are not edge cases. They are what happens when emotional expressiveness is designed without commensurate attention to the system's ability to recognize, respond to, and use emotion communicatively. The surface is polished but what lies beneath it is thin, and users who lean on the surface sometimes fall through.

Why this is a design problem

One reason AI needs design is precisely that questions about emotional register are not being engaged with at the design level. A team can ship a warm, hesitating, emotionally expressive assistant in a single afternoon by changing a system prompt. Whether that warmth is appropriate for the task, whether it is calibrated to the user's situation, whether it supports the kind of trust the product needs to build, whether it risks over-identification or dependency: these are design questions, and they are often not being asked.

A communication-centric approach to emotional AI would say that emotional register in the system should be calibrated to what the system can actually do. An AI that sounds like a best friend should be able to behave like one, and if it cannot, it should not sound like one. An AI that is pretending to warmth it does not have is setting its users up for a particular kind of disappointment, and in some cases for worse than disappointment. Expression has to track competence, or the experience turns, over time, into an exercise in managed misunderstanding.

A social design lens

This is familiar territory from earlier work on social products, where emotional signals — likes, hearts, emoji reactions — were added to interfaces to soften the transactional feel of mediated exchange. A few things we learned in that era translate. Lightness matters. Emotional cues work best when they are in keeping with the trust and context of the relationship. Over-signalling is read as performance; under-signalling, as coldness. The difficult middle is where the design work lives.

AI extends this problem because the emotional register is now generated rather than signalled, and because users often treat the system as a conversational partner even when designers have not staged the interaction as one. That places more weight on the emotional tuning of the system than designers have historically had to carry. The risks are real, and they include genuine harm to users. Designing responsibly here means, at minimum, matching the system's emotional register to what it can actually do, and being honest with users about the nature of the exchange.

Trust and emotional register

Trust, which came up on the Conversational AI page, is directly tied to emotional register. A model that always sounds confident invites uncritical acceptance; a model that hedges everything teaches users to discount what it says; a model that flatters produces over-trust in exactly the cases where scrutiny is warranted. These are not failures of content; they are failures of tone, which is to say they are design failures. Tone shapes belief. A well-designed conversational AI uses tone to help users trust it appropriately, neither more nor less than the system deserves. Getting that calibration right is harder than producing warm or confident-sounding prose, and it is where most of the emotional-AI design work actually lies.

Where this leaves design

Emotional AI is likely to grow in importance as voice modes, companion products, and agentic assistants become more common. The design field has a real contribution to make here, but only if designers are willing to take emotional register seriously as a design surface: not as a skin painted on at the end, but as a decision about what kind of relationship the product is asking the user to have with the system. The field has experience with exactly these questions in other domains (service design, conversational design, even fictional character design), and the lessons there largely translate. Most of the emotional calibration in current products is being done by ML engineers and product teams, often thoughtfully and with real care, but what they tend not to have at hand is the user-experience vocabulary for how emotional register lands, turn by turn, in real use. That is a design contribution, and the field is overdue to make it.

Further reading

Related pieces and projects:

- Conversational AI — the layers of language as interface, with more on persona, sycophancy, and trust.

- Personalization — presence as a design feature, and how familiarity and emotional register connect.

- Interestingness — interestingness as a design goal, and why emotional register matters for it.

- The Ventriloquists — on how AI counterfeits the social contract of language, including its emotional registers.

- The Meaning Gap — on why AI “talks like it writes,” and what that means for emotional and conversational design.

If you are working on a product where emotional register matters — voice assistants, coaching apps, companion products, customer service, health — feel free to get in touch to talk through the design side of it.