Interestingness is, in my view, what designing for conversation is ultimately about. It is also the most difficult challenge in conversational interaction design, because it lives in what designers have always found hardest: open dialog. Task-oriented interactions are comparatively legible — the user wants a flight booked, a ticket resolved, a question answered. But most of what makes a conversation feel worth having is not legible in that way. The user does not come in with a clean specification of what they want, what their interest actually is, or what a successful exchange would feel like. They may not even know themselves. Designing for that kind of open, under-specified exchange is what interestingness is asking us to do.

This is part of why LLMs have so often been framed as search engines. Search is what users are familiar with, and search is a well-understood design problem with a clean input and a clean output. Models can behave that way, and a lot of current design treats them as if they should. But LLMs are not really search engines, and their way of “learning” everything does not make them capable of regurgitating it on demand. Treating them as such, and designing for them as such, leaves most of what they are actually good for on the table — and the part being left behind is the part where interestingness would live.

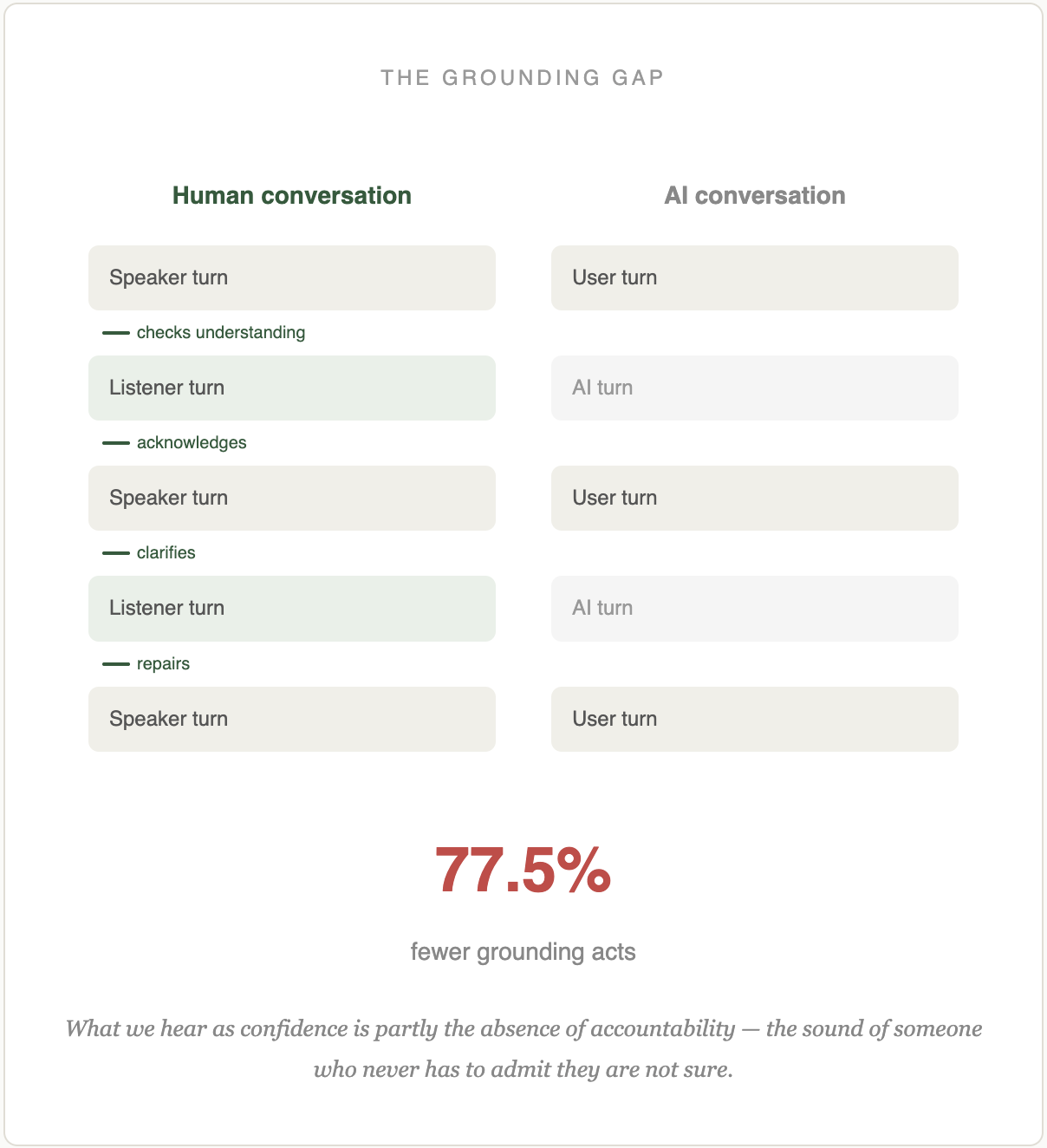

Most of what has been built and researched so far follows what we might call the monological paradigm of prompt-and-response. The model answers on the assumption that the user's intent is already fully present in what they typed. The AI does not engage with the user about the interest behind the prompt, because there is no space in the design for that interest to be articulated in the first place. The user is presumed to already know what they want — which, for most of the cases that would be interesting, is exactly the assumption that is wrong.

The historical arc

To see why this matters, it is useful to set AI alongside the design eras that preceded it. Four beats tell the story.

The web captured eyeballs. In the first era of the commercial internet, the operative unit was the eye on the page. The guiding metaphor was the book: sites had pages, readers had bookmarks, time on site meant time on the page. Design vocabulary grew up around the reader — stickiness, information architecture, navigation, pageviews. The business model was advertising sold against impressions.

Search tried to match output to input. Running through that page-based web was the effort to match what you were looking for to what was already on the shelf. The operative metric was relevance, and the landmark systems of the era were built to compute it: Google's PageRank (the name fits the era's metaphor, since it ranks pages, though it is also a play on Larry Page's name), Amazon's collaborative filtering, Netflix's recommendation engine. All were engineering answers to the same design question: given what you typed or clicked, which of the items we have is most worth showing you?

Social media captured attention. The unit shifted from the eye on the page to the attentional act. Engagement metrics measured attention at finer granularity: dwell time, scroll depth, likes, shares, return rates. The feed, the variable reward, the notification. Web 2.0 was social and attentional rather than bookish and navigational, and advertising was now sold against attention rather than merely against impressions.

AI can engage the mind. The unit shifts again. It is no longer the eye, the ranked item, or the attentional moment. It is the active thinking a user is doing — the question being formed, the interest being pursued, the half-thought still looking for words. What AI can do that no earlier medium could is engage with what you are thinking, turn by turn, in a way that moves you further along.

Relevance and interestingness

A search engine returns a list ranked by relevance. A recommender returns items ranked by the likelihood of a click. Both are relevance machines: given an input, what in the existing collection matches best? This is a real achievement, and the distinction I want to draw is not against it. It is an upgrade of it. The move from relevance to interestingness is from static to temporal, from extractive to generative, from picking out of an index to pursuing a direction. Relevance returns one of the items we already have. Interestingness makes topical moves in a conversation that is still being built.

Consider the familiar case of a recommender asked what movie to watch tonight. It predicts the next item you are likely to click on, and it will often be good at that. But notice what it is not doing. It is not asking why you are looking. It is not noticing that the last four things you watched were all from the late nineties and that there might be something in that. It is not attending to the journey. Relevance picks the next step on a path it already assumes you are committed to. Interestingness is the capacity to notice the path itself.
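The "noticing" in that example can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of how any real recommender works: the function names, the four-title window, and the watch history are all hypothetical. It checks whether the most recent titles share a release decade and, if so, turns that observation into a question rather than another ranked item.

```python
def shared_decade(release_years, window=4):
    """Return the decade shared by the last `window` titles, or None.

    `release_years` is a hypothetical watch history, oldest first.
    """
    recent = release_years[-window:]
    if len(recent) < window:
        return None  # not enough history to call it a pattern
    decades = {year // 10 * 10 for year in recent}
    return decades.pop() if len(decades) == 1 else None


def noticing_move(release_years):
    """Phrase the pattern as a conversational move, or return None if there is none."""
    decade = shared_decade(release_years)
    if decade is None:
        return None
    return (
        f"Your last few picks were all from the {decade}s. "
        "Is that what you're in the mood for, or just a coincidence?"
    )


# Hypothetical history: two recent releases, then four from the late nineties.
history = [2019, 2021, 1997, 1999, 1998, 1996]
print(noticing_move(history))
```

The design choice worth noting is the return value: a question about the path, not a prediction of the next click.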

Types of interest

Abstract claims about interestingness will not survive long without something concrete. It helps to notice that interest itself has types, each of which calls for a different conversational shape. A few familiar ones.

There is the interest in “the one”: a single best answer, letting someone else's judgment define “best.” There is “the best for me”: not the objectively best, but the thing that fits my situation, my style, my constraints. There is the “I don't know what I want” case, an anomalous state of knowledge in which the user knows their knowledge is incomplete but cannot specify what is missing. There is “I want to know more,” an established interest looking to go deeper. And there is “I want to anticipate,” the attempt to see around a corner and forecast what is coming. A design discipline organized around interestingness has to begin with the question of which kind of interest is in the room this time.
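To make "which kind of interest is in the room" a little more tangible, the five types can be written down as a small lookup. This is only a sketch: the enum member names and the opening move paired with each type are illustrative assumptions, not a taxonomy the argument depends on, and recognizing which type is present is the hard part the sketch does not attempt.

```python
from enum import Enum


class Interest(Enum):
    """The five types of interest named above (member names are hypothetical)."""
    THE_ONE = "the one"
    BEST_FOR_ME = "the best for me"
    DONT_KNOW = "I don't know what I want"
    KNOW_MORE = "I want to know more"
    ANTICIPATE = "I want to anticipate"


# Hypothetical opening moves; each pairing is an assumption for illustration.
OPENING_MOVE = {
    Interest.THE_ONE: "Give a single recommendation and say whose judgment it rests on.",
    Interest.BEST_FOR_ME: "Ask about situation, style, and constraints before recommending.",
    Interest.DONT_KNOW: "Offer a few contrasting directions and ask which one feels closest.",
    Interest.KNOW_MORE: "Build on what the user already knows and go one level deeper.",
    Interest.ANTICIPATE: "Lay out plausible scenarios and what would signal each one.",
}


def opening_move(interest: Interest) -> str:
    """Look up the conversational shape to start with for a given interest type."""
    return OPENING_MOVE[interest]


print(opening_move(Interest.DONT_KNOW))
```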

Designing the conditions for interestingness

We do not yet know how to design for interestingness, and part of the reason is that interestingness is not a thing a designer can directly control. It is a quality of an exchange rather than a property of any single turn, and it depends on the user as much as on the system. What a designer can do is produce the conditions under which interestingness becomes more likely. Those conditions, fortunately, map onto capabilities a model can be given or developed toward: maintaining topical focus without drifting, carrying a multi-turn interaction coherently, pivoting when the pivot is what interest wants, drawing on memory selectively and well, asking a clarifying question at the right moment, anticipating what might come next and, where appropriate, acting proactively rather than only reactively. Each of these is a capability we can evaluate, tune, and design around. Interestingness, on this view, is not something we design directly. It is something we design the conditions for.

Topicality is one of the most important of those conditions, and it is the one current AI has the most trouble with. Users drift: they arrive with a half-formed question, find that each partial answer exposes adjacent gaps, and get pulled sideways as they go. A topical collaborator would catch this and say, “Wait, are we still on the thing you came in with, or are we somewhere else now?” That is a small conversational move, and models, left to themselves, almost never make it. Research in conversational geometry and dialogue topic management suggests that how a conversation moves through topic space predicts its success almost as well as what is said in it. Topicality is a design surface in its own right, arguably the design surface where interestingness most directly lives.
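A crude version of that check-in can be sketched as follows. It is a minimal illustration, not a claim about how any model or product tracks topic: it compares the vocabulary of the user's opening turn with their latest turn using bag-of-words cosine similarity and suggests a check-in when overlap falls below a threshold. The function names, the tiny stopword list, and the 0.2 threshold are assumptions chosen for the example; a real system would more plausibly compare embeddings.

```python
import math
import re
from collections import Counter

# Hypothetical, deliberately tiny stopword list (an assumption for this sketch).
STOPWORDS = {
    "a", "an", "the", "is", "are", "to", "of", "and", "i", "for",
    "in", "on", "what", "how", "do", "my", "it", "that", "with",
}


def bag_of_words(text: str) -> Counter:
    """Lowercase the text, keep alphabetic tokens, drop stopwords, count the rest."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return Counter(t for t in tokens if t not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def should_check_in(opening_turn: str, latest_turn: str, threshold: float = 0.2) -> bool:
    """True when the latest turn shares little vocabulary with the opening turn."""
    return cosine(bag_of_words(opening_turn), bag_of_words(latest_turn)) < threshold


opening = "How should I plan a vegetable garden for a shady backyard?"
on_topic = "Which vegetables grow well in a shady garden?"
drifted = "Can you recommend a good mortgage refinancing calculator?"

print(should_check_in(opening, on_topic))  # False: "shady" and "garden" still overlap
print(should_check_in(opening, drifted))   # True: no shared content words
```

Detecting drift is the easy half. Whether to raise it, and how, is the design question: the same signal could prompt a check-in, a quiet return to the original thread, or nothing at all if the pivot is what the user's interest wants.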

Why this is a design goal, not a training goal

Interestingness is not something you get by making the model smarter. It is something you get by designing the exchange. Memory, retrieval, persona, turn-taking, grounding, repair — all the things we have been discussing on the Conversational AI and Personalization pages — are the levers that make interestingness possible or make it impossible. Models that happen to be interesting in one product and dull in another are not different models; they are differently designed. This is one of the reasons AI needs design. Engineering can deliver the capability, but design is what decides whether the capability ever meets the user in a useful way.

The pathology to come

Each of the earlier eras produced its own pathology. Interestingness will, too, and we should try to name its shape before it settles in. The risk worth watching for is a form of intellectual complacency — the sense that one has thought through a topic because the AI played back a version of one's thinking in flatteringly coherent prose. An AI that is interesting in the wrong way can leave its user with the feeling of having gone somewhere without having gone anywhere. How to design against this is a question the field will need to take up, but it will be easier to work on if we name the target clearly in the first place.

Conversation Is the Medium

I have been developing this argument at greater length in my Conversation Is the Medium project, which treats interestingness as the design goal proper to AI and tries to work out what that means across the eight categories of user-AI interaction (the user, agency, context, use cases, interaction, language, content, and the AI itself). The short version is on this page. The longer version takes the argument into each of those categories and asks what a design practice organized around interestingness actually has to do differently.

Further reading

Related pieces:

- Conversational AI — the four layers of language as interface, and where design has leverage below the surface.

- Personalization — presence as a design feature, and how familiarity becomes a lever for interestingness.

- Design Theory — how design theory will need to update to accommodate AI, including the move from utility to interestingness.

- The Reader Who Wasn't Reading — three reasoning approaches to the same question about what an LLM is actually doing when it reads.

If you are working on an AI product and would like to talk through what designing for interestingness could look like in your context, feel free to get in touch.