Most of the other pages on this site approach AI through the lens of language as interface — how people talk with models, what shapes a conversation, and where design has leverage in the exchange itself. Organizational design asks something different. When AI is being woven into the fabric of an organization, into roles, jobs, decisions, workflows, and the software that connects them all, the design challenge shifts. Language is still an interface at many of the touch points, but the bulk of the design work is structural. It is about where AI sits, what it is permitted to do, how it coordinates with humans and with other systems, and who is on the hook when something goes wrong.

It is often noted, with some irony, that AI threatens white-collar knowledge work more than blue-collar work. Generative AI now offers broad and deep expertise, though not reliably, and that unreliability is steadily being chipped away by better training, alignment, reinforcement learning, and domain-specific tuning. A great deal of work that used to be done by hand, often by teams of junior and senior staff, is now being done, or being attempted, by AI. Teams are being downsized or reconfigured in response, and the people who remain are under increasing pressure to work effectively alongside these deployments. That pressure is itself a design problem, and one that the experience design of AI tools tends to overlook.

Agents and agentic AI

The frontier of this work is what is now being called agentic AI, and the distinction is worth pausing on. An assistant answers your question or drafts your email, but you are still driving. An agent takes initiative within a scope you have defined — it uses tools, calls APIs, reads and writes to databases, hands work off to other agents, and reports back on what it has done. As agents become more capable, the design questions multiply. What actions can an agent take on its own? What should require approval? How do we know what it did? What happens when it is wrong? The language interface is still there, but it is no longer where most of the experience lives.

Human-in-the-loop, done well

Human-in-the-loop (HITL) is often invoked as if it were a checkbox: add an approval step somewhere in the workflow and the system is safe. In practice, workable HITL is serious design work. Where does the human enter? What do they see when they do? What authority do they actually have? What happens when they approve too quickly, because they are overloaded and the system almost always gets it right? These are questions about cognitive load, attention, authority, and trust, and they are design questions in the classic sense. A poorly designed HITL turns into rubber-stamping. A well-designed one integrates review into the rhythm of the work without grinding it to a halt, and makes it easy for the human to tell, at a glance, where something looks off.

Who is responsible?

One of the more uncomfortable questions for organizations integrating AI is the question of responsibility. When an agent acts and the action has consequences, who is accountable? The AI vendor, the team that deployed the agent, the employee who approved an output, or the one who configured the agent's scope? These are legal and organizational questions as much as design questions, but design shapes what the answers can be. A well-designed system makes it legible who did what, when, and why. A poorly designed one diffuses responsibility until it is almost impossible to pin down, and in the end it tends to fall on whoever was closest to the incident, usually the employee with the least authority to have prevented it.

Where design has leverage

There is real work here for researchers and designers, including ones coming from the customer-facing UX tradition. Stakeholder interviews, workshops, organizational readiness assessments, service-design blueprints, role and responsibility mapping, workflow discovery — most of the research methods that have long been used to socialize new technology inside organizations translate directly to AI integration. The discovery questions are familiar: which roles should AI supplement, which tasks can be automated without harm, which conversations can it contribute to, which question-answer jobs can it handle without hallucinating badly, which departments have the data and the practices to support a specialized deployment. These are questions design teams already know how to ask.

Where design tends to have the most distinctive leverage in organizational AI, though, is in the scaffolding, harnessing, and integration around the model. An AI agent does not work well on its own. It works well inside a system of permissions, tools, data connections, notification patterns, and review workflows. The design of that system is where agents earn trust, or lose it. Language is still one of the surfaces (every agent exposes itself through language at some point), but the bulk of the design surface is organizational: roles and authorities, tasks and dependencies, audit trails, exception handling, and the small software affordances that let a human-agent system actually run.
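To make that scaffolding a little more concrete, here is a minimal sketch in Python of one small piece of it: a gate that checks each action an agent requests against a defined scope, holds some actions for human approval, and records every request in an audit trail. Everything in it is hypothetical and chosen for illustration, including the names `AgentScope`, `ApprovalGate`, and `AuditEntry` and the example action names. A real deployment would sit on top of the organization's own identity, permission, and logging systems.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List, Optional


class Decision(Enum):
    ALLOWED = "allowed"
    NEEDS_APPROVAL = "needs_approval"
    DENIED = "denied"


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical scope: what an agent may do alone, and what needs sign-off."""
    autonomous: frozenset
    with_approval: frozenset


@dataclass
class AuditEntry:
    """One record of who asked for what, when, why, and with what outcome."""
    timestamp: datetime
    agent: str
    action: str
    reason: str
    decision: Decision
    approver: Optional[str] = None


@dataclass
class ApprovalGate:
    scope: AgentScope
    log: List[AuditEntry] = field(default_factory=list)

    def request(self, agent: str, action: str, reason: str,
                approver: Optional[str] = None) -> Decision:
        """Decide on a requested action and record the request either way."""
        if action in self.scope.autonomous:
            decision = Decision.ALLOWED
        elif action in self.scope.with_approval:
            # Allowed only once a named human has signed off.
            decision = Decision.ALLOWED if approver else Decision.NEEDS_APPROVAL
        else:
            # Anything outside the defined scope is refused by default.
            decision = Decision.DENIED
        self.log.append(AuditEntry(datetime.now(timezone.utc), agent, action,
                                   reason, decision, approver))
        return decision


if __name__ == "__main__":
    # Illustrative scope for an imagined billing agent.
    gate = ApprovalGate(AgentScope(
        autonomous=frozenset({"read_invoice"}),
        with_approval=frozenset({"issue_refund"}),
    ))
    gate.request("billing-agent", "read_invoice", "monthly reconciliation")
    gate.request("billing-agent", "issue_refund", "duplicate charge")
    gate.request("billing-agent", "issue_refund", "duplicate charge", approver="j.doe")
    for entry in gate.log:
        print(entry.timestamp.isoformat(), entry.agent, entry.action,
              entry.decision.value, entry.approver)
```

The design choice worth noticing is that denied and pending requests are logged alongside approved ones, and that an approval carries the approver's name. That is one way a system can keep "who did what, when, and why" answerable after the fact, though the right shape will vary by organization.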

Relevant experience

I bring to this work a long track record of enterprise consulting. Five and a half years at Deloitte Digital (2012–2017), with large clients such as Janssen, Cisco, Wells Fargo, Humana, Intel, and Chevron, involved exactly the kind of stakeholder interviewing, methodology design, documentation, and cross-team coordination that AI integration now calls for. My independent consulting before and since has spanned startups and multinationals in a similar vein. Much of what I learned about how new technology actually gets absorbed into the work of large organizations, and about the kinds of friction that derail even well-designed implementations, applies directly to the AI integration work happening now. The Work page has a fuller engagement history.

Where this is heading

The future of agentic AI in organizations looks both genuinely interesting and genuinely challenging. As models become more capable of semi-autonomous action — making bounded decisions, orchestrating workflows, coordinating with other agents — the design work around them only becomes more consequential. What gets designed well will produce systems that extend what a smaller human team can do. What gets designed badly will produce systems that automate confusion, accelerate errors, and put the wrong people on the hook for outcomes they could not have controlled. I am interested in working on the first kind. Feel free to get in touch if you have a specific deployment or integration in mind.

Further reading

Related pieces and projects:

- Domain-Specific AI — on building AI systems that speak a specific domain well, which is often the first step before agentic integration.

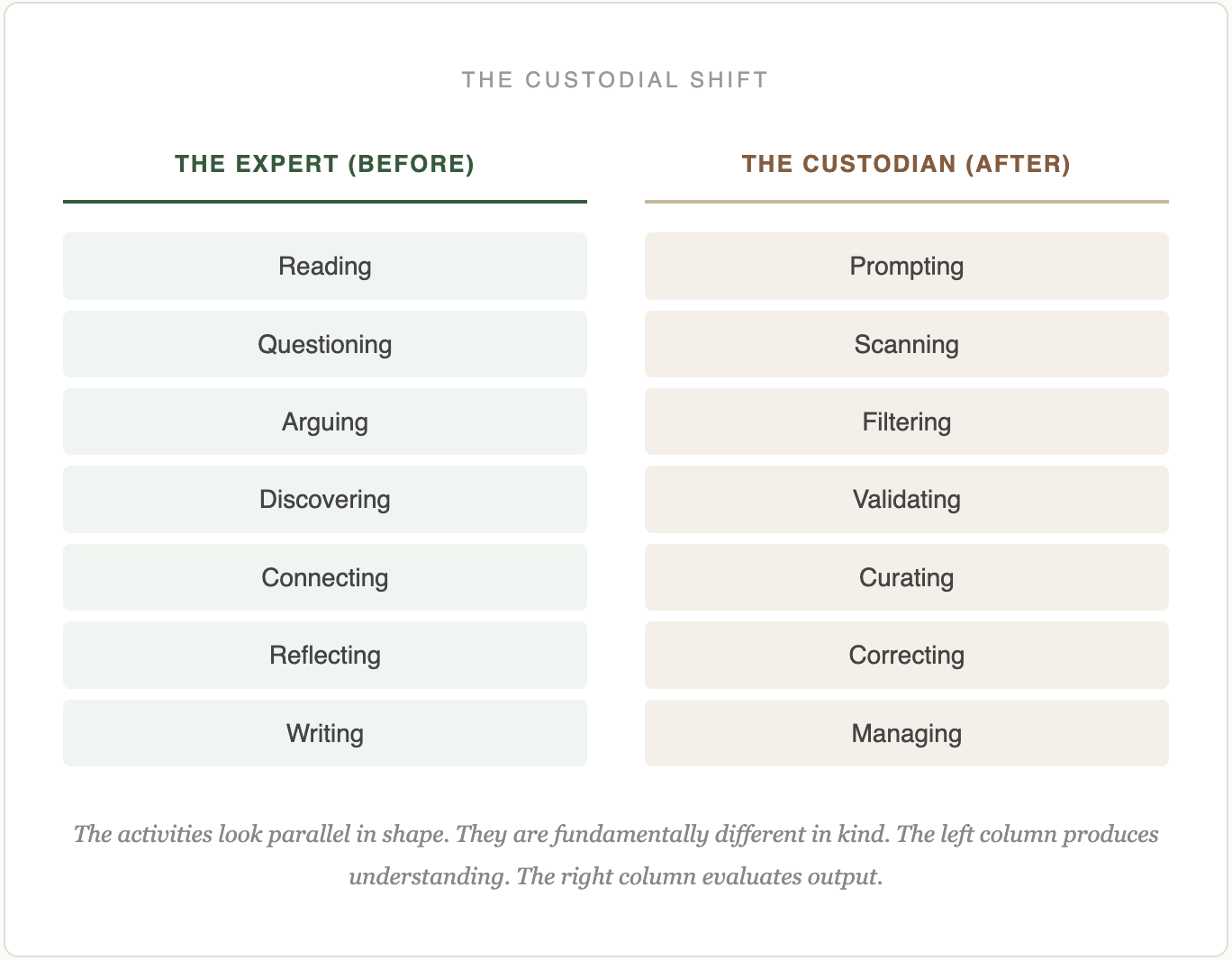

- Knowledge Custodians — on what expertise becomes as AI absorbs more of its routine surface.

- Conversational AI — where language remains the interface, and where persona and trust still do much of the work.

- Design Theory — the broader case for design as a discipline AI cannot do without.