Domain-specific applications are another example of language as the interface, though here the interface works less as a conversational medium and more as a means of navigation, information, content, and instruction. The user is searching, specifying, finding, issuing requests. The system is retrieving, answering, following directions. A specialized domain has its own terminology and its own ways of speaking, and the user working inside it needs accuracy from the model more than warmth. The relationship is less personal and more professional. The user has to be able to assume that the model knows the domain well enough to be factually right, to follow instructions correctly, and not to hallucinate. In healthcare, law, finance, or any domain where the output actually matters, reliability is the job.

The promise is familiar. A law firm wants an AI that can answer questions across its case archive. A manufacturer wants a technician's assistant that knows the equipment. A hospital wants a triage and documentation aid that speaks clinical language correctly. A customer-service team wants a chat system that actually knows the products and refund policies rather than improvising. In each case the underlying language model is essentially the same; what differs is the layer of customization between the base model and the user, and how well that layer reflects what the domain actually requires.

Base model versus domain system

There is a real tension at the heart of this work. The foundation models that perform best in conversation are also the most generic, and aggressive customization can, in many cases, degrade what they do best. Heavy fine-tuning narrows the model; elaborate retrieval scaffolding can make it read as stiff or defensive; knowledge graphs, though useful for grounding, integrate with generative models in ways still being worked out. None of these methods is a free lunch. What design brings, and what is often missing when engineering teams work on domain-specific AI in isolation, is a clear sense of what the user is actually trying to do: which questions really get asked, which errors really hurt, which phrasings really show up in practice, and which edges of the domain the system will keep running into. Without that, customization becomes an inward-looking exercise in aligning the model to documents rather than to use.

What design brings to the stack

Domain specialization happens across several layers, each of which involves technical choices as well as design ones. The diagram below sketches a typical stack, with the kinds of design contributions that belong at each layer.

What designers can capture that engineers often miss

Designers and user researchers can help capture much of what engineering teams need in order to tune a model well but often do not have at hand: the language, terminology, and taxonomy in use inside a domain; the tasks, flows, and actions that actually come up in the work; the roles people occupy and the rules, written and unwritten, that shape how they interact; and the exceptions, edge cases, and judgment calls that distinguish the expert from the competent novice. Some of this is suited to being codified in a knowledge graph or structured ontology; other parts work better as evaluation sets, failure-mode libraries, or benchmarks; others still are best shaped through reasoning chains and careful system prompts. Each method carries its own risk: fine-tuning on a small corpus can overfit, and retrieval-augmented generation (RAG) can return content that is adjacent to the right answer but wrong. The right mix depends on the domain.
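To make the idea of a failure-mode library a little more concrete, here is a minimal sketch of how a few scenarios captured in user research might be encoded as an evaluation set and checked against a system's answers. Everything in it is hypothetical: the `Scenario` fields, the refund-policy examples, and the crude substring matching all stand in for whatever a real team would use (often human review or a model-based grader).

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """One evaluation case captured from user research (hypothetical schema)."""
    query: str                  # phrasing as users actually ask it
    must_mention: list[str]     # facts a correct answer has to contain
    must_not_mention: list[str] = field(default_factory=list)  # known wrong answers
    failure_mode: str = ""      # label from the failure-mode library


def check(scenario: Scenario, answer: str) -> list[str]:
    """Return the problems found in `answer`; an empty list means it passed."""
    text = answer.lower()
    problems = []
    for fact in scenario.must_mention:
        if fact.lower() not in text:
            problems.append(f"missing: {fact}")
    for bad in scenario.must_not_mention:
        if bad.lower() in text:
            problems.append(f"{scenario.failure_mode or 'forbidden'}: {bad}")
    return problems


# Hypothetical scenarios for a customer-service assistant.
SCENARIOS = [
    Scenario(
        query="can I get my money back if the box was opened?",
        must_mention=["30 days"],
        must_not_mention=["no refunds on opened items"],
        failure_mode="adjacent policy (store rule applied to online orders)",
    ),
    Scenario(
        query="how long does a refund take to show up?",
        must_mention=["5 business days"],
    ),
]

if __name__ == "__main__":
    answers = [
        "Opened items can be returned within 30 days for a full refund.",
        "Refunds usually appear within 5 business days.",
    ]
    for scenario, answer in zip(SCENARIOS, answers):
        print(scenario.query, "->", check(scenario, answer) or "pass")
```

The scoring here is deliberately simple. The value of such a library lies in the scenarios themselves: they record which questions users really ask and which wrong-but-plausible answers really hurt, so every change to prompts, retrieval, or fine-tuning can be rerun against them.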

Expert systems and the shape of knowledge work

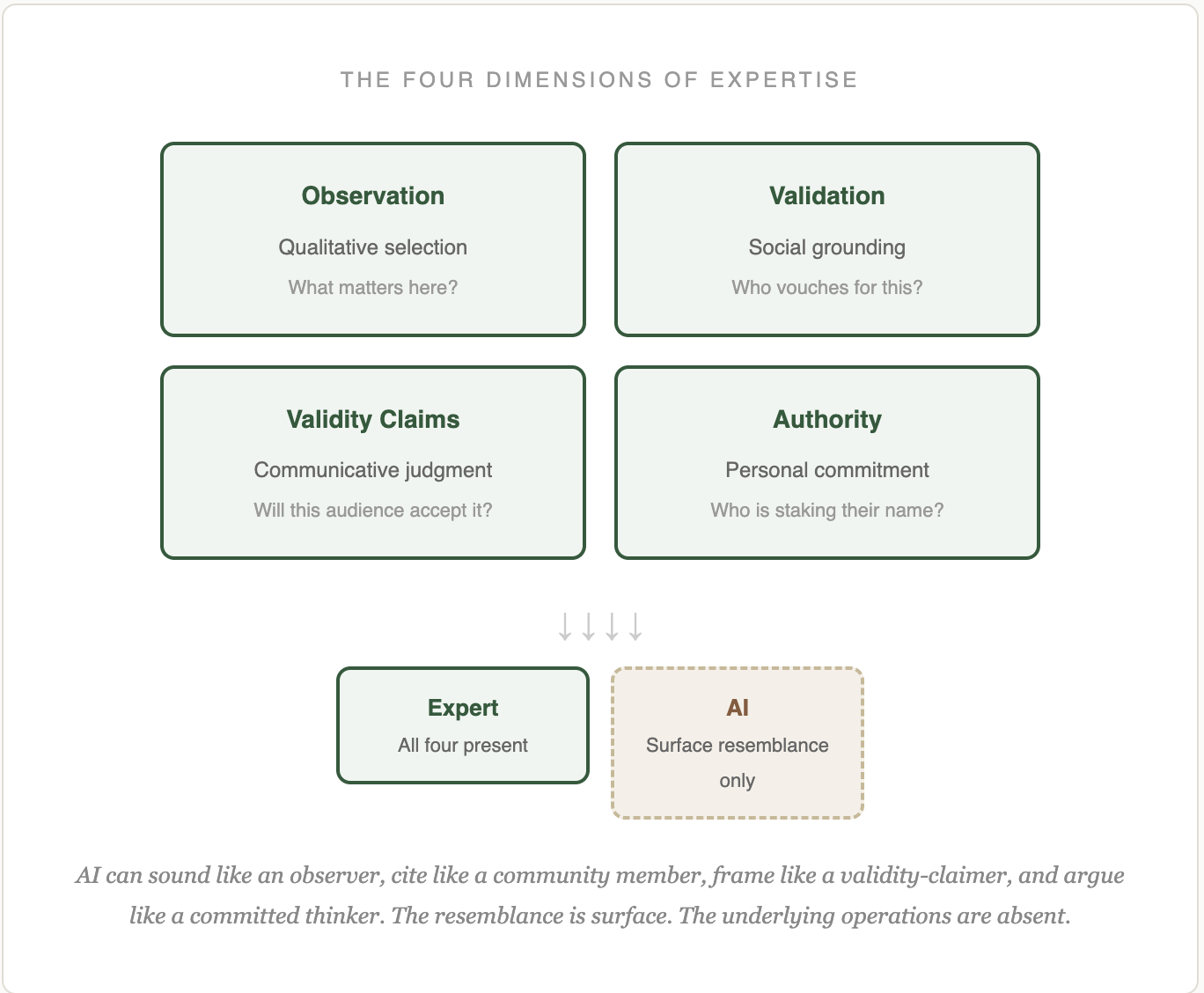

Regardless of which technical path an organization takes, expert systems built on generative AI (for search, knowledge management, question answering, recommendation, customer service, triage, assistants, and a long list of related uses) look inevitable wherever an organization's value lives in its accumulated expertise and documentation. These systems have implications not only for the customer-facing experience but for organizational structure. What AI can now do across a large number of domains will reshape knowledge work itself, and the role and status of domain experts will change as more of their work becomes machine-assisted. I have been working through some of this in a separate project I call Knowledge Custodians, which asks what expertise becomes when AI takes on more of its routine surface.

A social design lens

There is a thread here from earlier work on social products. Domain-specific AI is, in many cases, mediating social interactions that used to be handled by humans: the service interaction, the expert consultation, the helpdesk exchange. These are not merely technical interactions; they have tone, pacing, deference, trust, and a host of unwritten social rules that vary by domain. A design lens that takes these seriously tends to produce products that feel respectful of both the user and the expert whose work the system is standing in for. A purely technical approach tends to produce products that work but do not earn trust, and in domains like health, finance, or law, trust is not optional.

What I can help with

In practice, this is one of the places where my work tends to be most useful to clients: translating what an organization knows (documentation, procedures, expertise, institutional rules, the subtleties of how the work actually happens) into the materials a team needs in order to build, tune, and evaluate a domain-specific AI system. That includes capturing terminology and taxonomy, mapping task flows and decision points, drafting evaluation criteria and failure-mode libraries, designing conversation and escalation patterns, and thinking through where the system should and should not try to substitute for human judgment.
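Escalation patterns are one place where a design decision can be written down precisely enough for engineers to implement and test. The sketch below assumes a hypothetical customer-service policy: a few topics that always go to a person, plus two confidence thresholds that decide whether the system answers, answers with a pointer to a human, or hands off. It also assumes some upstream classifier supplies the topic label and a confidence score between 0 and 1; that classifier, the topic names, the threshold values, and the `Route` options are all illustrative assumptions, not recommendations.

```python
from enum import Enum


class Route(Enum):
    ANSWER = "answer"              # system responds directly
    ANSWER_WITH_CAVEAT = "caveat"  # system responds and offers a human option
    HAND_OFF = "hand_off"          # a person takes over the conversation


# Hypothetical topics the design team decided must always reach a person.
ALWAYS_HUMAN = {"legal_complaint", "account_closure", "chargeback_dispute"}

# Hypothetical thresholds; real values would come from evaluation, not guesswork.
CONFIDENT = 0.85
UNSURE = 0.6


def route(topic: str, confidence: float, user_asked_for_human: bool = False) -> Route:
    """Decide who handles a turn, given a classified topic and a confidence in [0, 1]."""
    if user_asked_for_human or topic in ALWAYS_HUMAN:
        return Route.HAND_OFF
    if confidence >= CONFIDENT:
        return Route.ANSWER
    if confidence >= UNSURE:
        return Route.ANSWER_WITH_CAVEAT
    return Route.HAND_OFF


if __name__ == "__main__":
    print(route("refund_timing", 0.92))       # Route.ANSWER
    print(route("refund_timing", 0.70))       # Route.ANSWER_WITH_CAVEAT
    print(route("chargeback_dispute", 0.99))  # Route.HAND_OFF
```

Keeping the policy as plain data plus a small function makes it something subject-matter experts can read and argue with, and something the team can cover with test cases, which is most of the point of writing it down.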

I work closely with engineering and product teams, and with subject-matter experts inside the organization. The deliverables from my side tend to be the things that make the downstream technical work sharper: a documented content architecture, a set of evaluation scenarios grounded in real user situations, a conversation-design specification, and a shared understanding of what the product should and should not try to be. If you are thinking about building a domain-specific AI system and would like to talk through the design side of the work, feel free to get in touch.

Further reading

Related pieces and projects:

- Knowledge Custodians — on what expertise becomes as AI absorbs more of its routine surface. The strategic backdrop for any domain-specialization project.

- Conversational AI — the layers of language as interface, with more on persona, grounding, and trust.

- Prompts — how prompts become design artifacts, with the anatomy of a production prompt.

- Modular Reasoning — the playbook of reasoning modules, useful for orchestrating research-heavy and analysis-heavy domain work.

- Design Theory — the larger case for design as a discipline AI cannot do without.