Large language models, left to themselves, give users a fairly generic experience. Responses may be specific to the prompt at hand, but they come from a one-size-fits-all base model that does not know who it is speaking with, what that user cares about, or what was said the last time they talked. The promise of personalized AI, and of AI assistants in particular, is to shift that relationship, so that the system, in some meaningful sense, comes to know the user.

It is worth pausing on what the promise actually entails, because “personalized” is doing a lot of work in the phrase. What users want, if we take the wish seriously, is not simply a system that tracks their behavior and produces better guesses. It is something closer to what we would call presence in another person — the felt quality of being with someone who is there, who knows us at least a little, and whose attention is available when we want it without demanding it when we do not.

Presence as a design feature

Presence is one of the design features AI assistants will, over time, increasingly aim for. In human social interaction, co-presence is characterized by availability, which is shown in turn through interest and attention. Erving Goffman, one of my favorite sociologists, whose close observation of how people behave in each other's company is still some of the sharpest work on everyday interaction, drew a useful distinction here between active engagement and what he called civil inattention. Civil inattention is the way people on a subway or in an elevator acknowledge one another just enough to indicate that they are both there, without demanding conversation. Both modes are forms of presence. Personalized AI, to feel like more than a search bar, will want a repertoire of them.

An assistant designed for presence, on this view, will want to be able to show that it is there without demanding interaction; to show attention without requiring it in return; and, perhaps most importantly, to demonstrate familiarity with the user — their habits, their interests, their ongoing projects — in ways that build up over time rather than asking the user to re-explain themselves at every session.

The technical work underneath

There is real engineering required to produce this kind of effect: memory systems that persist meaningfully across sessions, retrieval that picks the right details out of months of accumulated interaction, preference learning that can tell the difference between what a user finds useful and what they merely accept, and profile models that keep an evolving picture of the user without collapsing them into a stereotype. These are hard problems, and much of the personalization research happening in AI right now is concerned with them. They are, however, not only engineering problems. What counts as the right detail to recall, the right moment to recall it, and the right way to voice that recall are experience-design questions, and they belong to designers at least as much as to ML teams.
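To make the retrieval problem concrete, here is a minimal sketch of picking a remembered detail by combining relevance with recency. Everything in it is an assumption for illustration: the `Memory` record, the word-overlap relevance measure, and the 30-day half-life are hypothetical stand-ins, not a description of how any particular product works. A real system would more likely use embeddings and a learned ranking.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    """A remembered detail (hypothetical record, for illustration only)."""
    text: str
    age_days: float  # time since the user mentioned it


def tokenize(text: str) -> set[str]:
    # Lowercase words with surrounding punctuation stripped.
    return {w.strip(".,!?").lower() for w in text.split() if w.strip(".,!?")}


def score(memory: Memory, query: str, half_life_days: float = 30.0) -> float:
    """Word-overlap relevance (Jaccard) damped by an exponential recency decay."""
    q, m = tokenize(query), tokenize(memory.text)
    if not q or not m:
        return 0.0
    overlap = len(q & m) / len(q | m)
    recency = 0.5 ** (memory.age_days / half_life_days)  # assumed half-life
    return overlap * recency


def retrieve(memories: list[Memory], query: str, k: int = 1) -> list[Memory]:
    """Return up to k memories with a nonzero score, best first."""
    ranked = sorted(memories, key=lambda mem: score(mem, query), reverse=True)
    return [mem for mem in ranked[:k] if score(mem, query) > 0]


memories = [
    Memory("planning a trip to Lisbon in May", age_days=21),
    Memory("prefers short answers", age_days=2),
]
print(retrieve(memories, "any tips for the Lisbon trip?"))
```

Note what the sketch leaves out: it can rank details, but it has no opinion on whether any of them should be voiced at all. That part is the design question.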

The line between familiar and surveillant

Personalization has a design failure mode worth naming clearly, because products miss it often. An AI that knows too much, or that shows its knowledge at the wrong moments, produces the opposite of presence. It produces a sense of being watched. The distinction matters enormously. A friend remembers something you mentioned three weeks ago and brings it up when it is relevant; a surveillant system brings it up as a performance of having been watching. The gap between the two is almost entirely a matter of when the knowledge is deployed, why it is brought forward, and how it is framed. These are design decisions, and they live in the space between personalization as a feature and personalization as an experience.

Much of the difference comes down to lightness. Presence is more interesting, and more trustworthy, when it is light. A small acknowledgement that you have been here before; a moment of recognition without performance; a willingness to forget when the context has shifted. These are moves the assistant can be designed to make, and the absence of such design is often why personalization in current AI products feels either generic (the system does not know anything) or uncanny (the system knows everything and says so).
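One way to picture lightness is as a gate that sits between what the assistant knows and what it says. The sketch below is a hypothetical illustration, not an established method: the `surface` function, its `relevance` score, and the 0.6 threshold are all assumed for the example. It stays silent unless the user has raised the topic and the detail clearly fits, and when it does speak it frames the recall as an offer the user can decline.

```python
from typing import Optional


def surface(detail: str, relevance: float, user_raised_topic: bool,
            threshold: float = 0.6) -> Optional[str]:
    """Return a light acknowledgement, or None to stay silent.

    All names and the threshold are illustrative assumptions.
    """
    # Stay silent unless the user brought the topic up and the detail clearly fits.
    if not user_raised_topic or relevance < threshold:
        return None
    # Frame the recall as an offer, not as proof of having been watching.
    return f"Last time you mentioned {detail}. Is that still relevant?"


print(surface("the Lisbon trip", relevance=0.9, user_raised_topic=True))
print(surface("the Lisbon trip", relevance=0.9, user_raised_topic=False))
```

The default here is silence. Knowing something is never, on its own, a reason to say it.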

An intangible design surface

Personalization is, in design terms, a kind of affordance — a set of signals and behaviors through which a system makes itself knowable, approachable, and usable to a particular person. At the moment the affordance is mostly linguistic. An AI appears to us as a voice or a text, and we read its personality off what it says and how it says it. That is already considerable material to design for. But the trajectory is clear. AI will increasingly be embodied; it will have a face, or at least a stable visual presence, particularly in robotics. It will carry a more coherent personality across sessions and across modes. It will have memories — of itself, and of us — that accumulate and shape how it responds. It will anticipate us as well as remember us, which is itself a step change in what personalization means. As the AI moves closer in these ways, the design surface becomes correspondingly harder to work with. It is no longer a set of screens or even a set of conversational moves. It is something nearer to a relationship.

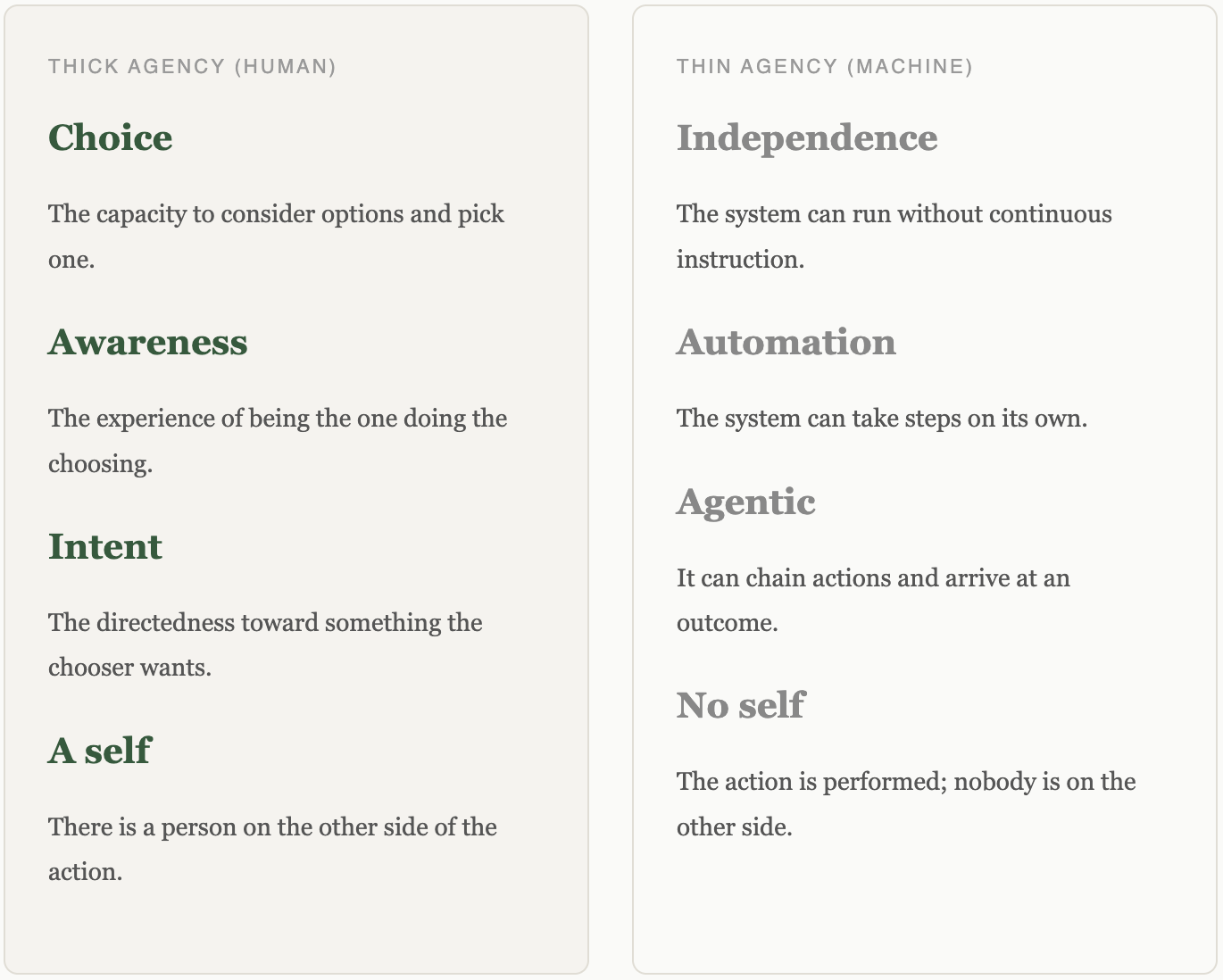

Not all of this will be welcome. Some users will find it uncanny, and some will find it inappropriate, and neither will be wrong. The more the AI can do — the more it acts autonomously, holds a persona, carries memory forward — the more each capability raises a design question that was not pressing when the model was just answering questions. The more an AI behaves like an agent, the more we question its autonomy. The more it behaves like a person, the more we question its persona. The more it behaves like an assistant, the more we question what it remembers about us and what it does with that information. These are intangible design problems, sitting on an intangible design surface, and they are some of the most consequential problems in the space. The risks around product rollout, adoption, and continued use scale with capability. The more the model can do, the more design has to do alongside it.

A social design lens

This reminds me of social interaction design: the more social a technology becomes, the greater both the risks and the opportunities for engaged user interaction. Activity streams, follows, read receipts, and profile pages were, in various ways, the earliest experiments in designing mediated presence. A few things we learned in that era translate directly to AI. Presence is more interesting when it is light. Being known is not the same as being tracked. The small signals — a nod, a read receipt, a returned greeting — do most of the work. Personalized AI picks up that thread, with language and memory rather than feeds and follow relationships doing the labor.

A further point. As AI agents begin to show up as participants on social platforms — as coworkers, actors, quasi-persons with roles and workflows — the question of how an AI manages presence will stop being a question about one user and one assistant and start being a question about agents among people. That shift is coming, and it is worth designing for now rather than later.

Personalization and interestingness

One last connection. In my Conversation Is the Medium project I have been arguing that interestingness, rather than engagement, is what AI-based interactions should aim for — the quality of drawing a user into exploration of what they did not already know. Personalization is one of the main levers for this, because the line between a generic assistant and an interesting one often runs through what the assistant happens to know. A generic model can be fluent. A model that can pick up a thread from last week and extend it somewhere the user had not yet gone can be interesting in a way that no generic model can. The design question is how to get to that kind of interestingness without straying into the territory of surveillance.

Where this leaves design

Personalization, taken seriously, is not a feature list. It is a set of design commitments about availability, attention, familiarity, and continuity — about who the assistant is to the user, how it shows up, and what it does with what it knows. Products that get this right will feel, to their users, like something is there on the other side of the exchange. Products that do not will feel either thin, because the assistant knows nothing, or strange, because it knows everything and says so. Which of those a given product becomes is mostly a design decision.

Further reading

Related pieces and projects:

- Conversational AI — the four layers of language as interface, and where design has leverage below the surface.

- Interestingness — the concept in more detail, and why it matters as a design goal for AI.

- Emotional AI — on tone, emotion, and the affective register of AI interactions.

- The Meaning Gap — on why AI “talks like it writes,” and what that implies for conversational and personalized design.

- The Ventriloquists — on how AI counterfeits the social contract of language, and what that implies for presence and voice.

- Conversation Is the Medium — an essay for ML and experience designers arguing that interestingness should be the goal of AI-human interaction.

If you are working on a personalized AI product and would like to think through the design side of it, feel free to get in touch.