Prompting is a skill, and like most skills it rewards a nuanced understanding of how a given model responds: where it is strong and where it drifts, what holds its attention and what it quietly ignores. It also rewards keeping up, because models and system composition change quickly enough that what worked a few months ago often does not work now. What interests me more as a designer, though, is that prompting is the clearest evidence I know that language is the interface. Users of LLMs are learning, through prompting, to modify and shape their everyday language in order to steer, guide, direct, and instruct a system whose only surface is language. The skill is new; the communicative competency it rests on is the one we all already have.

Prompts are expressions, not queries

It is more useful to think of a prompt as an expression than as a query or an input. When a prompt is run against a body of research, as I run mine against my own Obsidian vault, it shapes not only what Claude writes but how it reads: which topic notes it reaches for, which strategies of exploration and critique it uses, whether it pauses to interrogate its own findings, what tone and register the output takes, even the format. A prompt, in this sense, designs the model's behavior rather than simply requesting an answer. That design work tends to be overlooked because the common framing treats a prompt as a one-shot question. In practice, a well-crafted prompt is closer to a brief written to a collaborator who does not know you and will not remember you afterwards, but who will, for the length of the exchange, do more or less what the brief asks for.

The design approach here is not visual, but it is organized. It has a taxonomy. It is a kind of information design, in its closeness to how search evolved, and a kind of navigation, in its closeness to browsing and exploring. Instead of clicking through menus or scanning a results list, the user shapes language that shapes what the model does. The interface is language, but it is a language that users are learning to modify, to structure, and to wield as a design medium in its own right. For designers coming from a visual tradition, this is a good place to start, because it translates an unfamiliar problem (how do you design for something without a screen?) into a familiar one (how do you shape a medium to do what you need?).

The diagram below is a rough anatomy of a production prompt — the components a well-shaped prompt tends to include, and what each is doing.

Context engineering

“Prompting” as a term is in the process of being renamed. The term of art is shifting toward context engineering, which tracks something the earlier term obscured: what really shapes an LLM's behavior is not any single instruction but the full context in which generation happens — the system prompt, the retrieved documents, the conversation history, the session memory, the tools the model has access to, the things it has been told to forget. This is the same language-as-interface problem at a larger scale. What is kept, what is dropped, what is foregrounded, what quietly falls out of attention — these are all choices, and they shape the model's behavior as decisively as the words of any one prompt. For designers, this deepens the earlier point rather than replacing it. Shaping the language of an AI product now includes shaping the language environment around every generation: the architecture of memory and forgetting in which the model acts.
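
One way to make "what is kept, what is dropped" concrete is a small sketch of context assembly under a size budget. This is an illustration under stated assumptions, not a description of how any particular product works: the `ContextItem` type, the source labels, the priority numbers, and the word-count stand-in for a tokenizer are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    source: str    # hypothetical labels: "system", "retrieved", "history", "memory"
    text: str
    priority: int  # assumption: lower number = more important to keep


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: count whitespace-separated words."""
    return len(text.split())


def assemble_context(items: list[ContextItem], budget: int):
    """Keep the highest-priority items that fit the budget; report what was dropped."""
    keep = set()
    used = 0
    # Visit items from most to least important, keeping each one that still fits.
    for i in sorted(range(len(items)), key=lambda i: items[i].priority):
        cost = estimate_tokens(items[i].text)
        if used + cost <= budget:
            keep.add(i)
            used += cost
    # Preserve the original ordering in both lists.
    kept = [item for i, item in enumerate(items) if i in keep]
    dropped = [item for i, item in enumerate(items) if i not in keep]
    return kept, dropped


def render(kept: list[ContextItem]) -> str:
    """Join the kept items into the text the model would actually see."""
    return "\n\n".join(f"[{item.source}]\n{item.text}" for item in kept)


items = [
    ContextItem("system", "You are a careful research assistant.", 0),
    ContextItem("history", "User asked about tagging conventions last week.", 3),
    ContextItem("retrieved", "Note: prompts as expressions, not queries.", 1),
    ContextItem("history", "User: summarize the note on prompts.", 2),
]
kept, dropped = assemble_context(items, budget=18)
print(render(kept))
print("dropped:", [item.text for item in dropped])
```

The point of the sketch is that the `dropped` list is a design decision as much as the `kept` list: the priority scheme decides what falls out of attention before the model generates anything.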

Prompting as reasoning

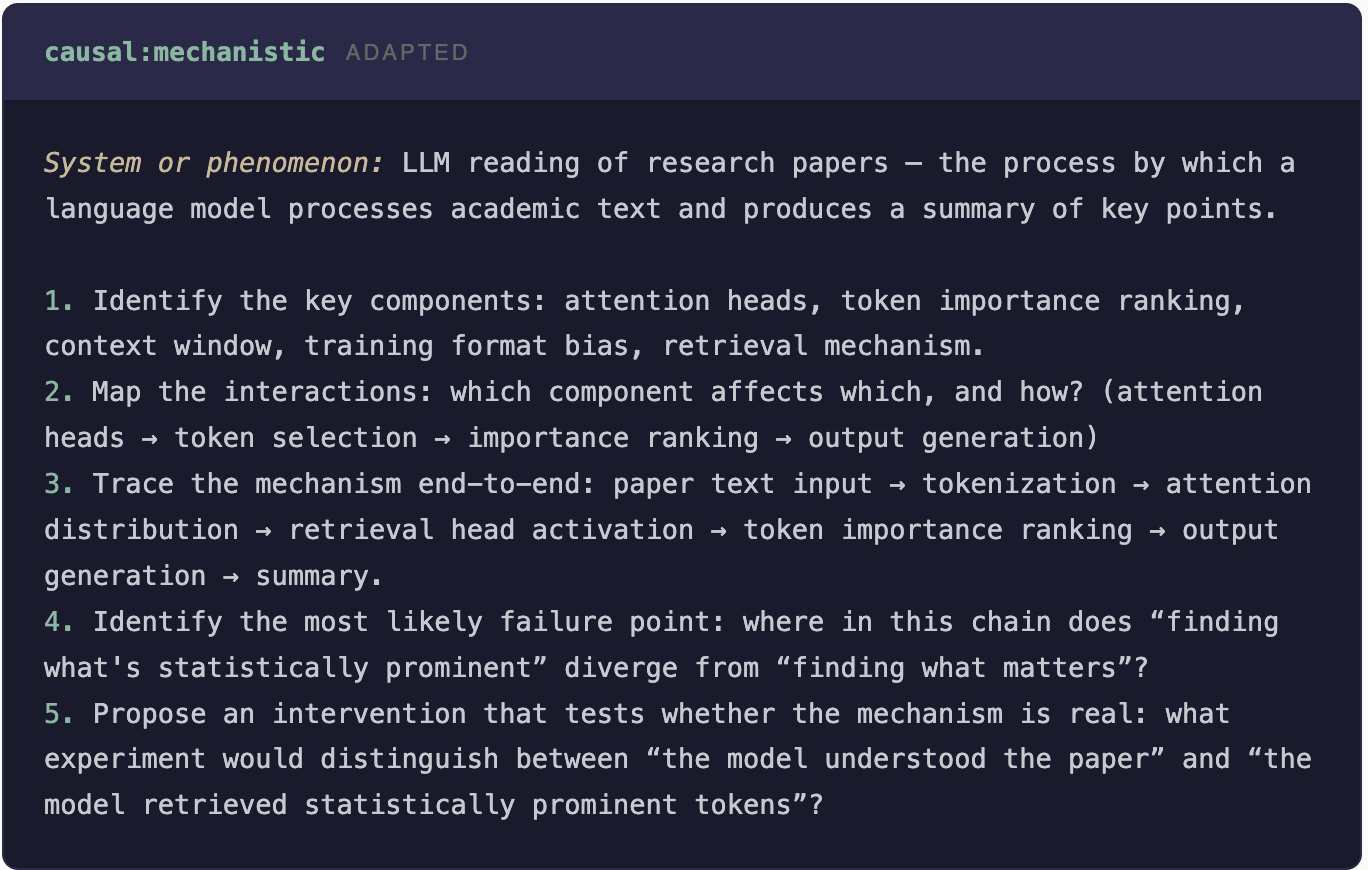

Prompts can also be reasoning, which is worth naming separately. The modular reasoning work I have been developing inside my Obsidian vault is an example. A reasoning module is a prompt designed not to ask a question but to make the model think in a particular way — causally, dialectically, analogically, diagnostically — and to return the result in a shape the next module can use. This too is design. It is the design of language that forces an LLM to organize its thinking, to plan, to strategize, to explore, to iterate, to reflect. Those are moves the raw model does not make on its own and would not make in response to a casual question. They are competencies being built on top of the core linguistic competency users already have — new skills that belong to the interaction, not to the model. Designers, researchers, and writers who take these on start to get work out of LLMs that is categorically different from what a single well-phrased question produces.
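
As a rough illustration of "a shape the next module can use", here is a minimal sketch of chaining. It is not the implementation behind my own modules: the `ReasoningModule` fields, the example instructions, and the `call_model` parameter (a stand-in for whatever function sends a prompt to an LLM and returns its text) are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ReasoningModule:
    name: str          # hypothetical, e.g. "causal" or "dialectical"
    instruction: str   # how the model is asked to think
    output_shape: str  # the form the next module expects to receive

    def build_prompt(self, material: str) -> str:
        """Combine the thinking instruction, the material, and the required output shape."""
        return (
            f"{self.instruction}\n\n"
            f"Material:\n{material}\n\n"
            f"Return your result as: {self.output_shape}"
        )


def run_chain(
    modules: list[ReasoningModule],
    material: str,
    call_model: Callable[[str], str],
) -> list[tuple[str, str]]:
    """Feed each module's output to the next module; return every intermediate result."""
    steps = []
    current = material
    for module in modules:
        current = call_model(module.build_prompt(current))
        steps.append((module.name, current))
    return steps


# Hypothetical two-step chain: causal analysis, then a dialectical critique of it.
chain = [
    ReasoningModule(
        "causal",
        "Identify the causal claims in the material and what each depends on.",
        "a numbered list of cause -> effect statements",
    ),
    ReasoningModule(
        "dialectical",
        "For each statement, give the strongest objection and a possible synthesis.",
        "one short paragraph per statement",
    ),
]
```

Calling `run_chain(chain, notes, call_model)` with a real model function would return the output of each step in order, which makes it possible to inspect where a chain's reasoning went wrong rather than only its final answer.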

A social design lens

As in my thinking with SxD, when we were designing social products, prompting is a place where the interpersonal quality of the interaction shows up plainly. With AI, the interpersonal dimension has migrated into the technology itself. We are no longer only designing how people coordinate with each other through a system; we are also designing how a person coordinates with a system that performs enough of the role of an interlocutor to warrant interpersonal treatment. What you are writing in a prompt is, in effect, a message to an entity that will reply, and the shape of that message does the same kind of work that social cues have always done in mediated interaction. The difference is that the “social” is now a property of the technology rather than a separate online world we log into.

Why design has to show up for this

This is a place where the design field has a real contribution to make and is still largely absent. The craft of shaping prompts, orchestrating context, and designing reasoning chains is not ML engineering. It is information design and interaction design applied to a new medium. Users are mostly learning these skills in a vacuum, because design has not yet arrived to build the vocabulary, the patterns, and the taxonomies the work needs. That gap is closing, but slowly, and I would like it to close faster.

Further reading

For readers interested in this frame, a few related pieces and projects:

- Modular Reasoning — the playbook of 123 reasoning modules I use for research and writing, and how modules chain into composable prompts.

- The Reader Who Wasn't Reading — three reasoning approaches, three self-authored prompts, three posts from the same research pool. A concrete demonstration of how prompt design shapes outputs.

- Intellectual Camouflage — a linguistic analysis of the style AI writing uses to sound expert. Approaches prompting from the critical side: what patterns does it produce, and how do readers detect them?

- Conversational AI — why language is an interface, and why prompt design is part of the larger design problem of talking with machines.

If a product you are working on depends on prompts that have to behave predictably in the hands of real users, the underlying work tends to be design work. Feel free to get in touch if it would be useful to talk through what that looks like for a specific system.